Content from Introduction to R and Rstudio

Last updated on 2023-01-24 | Edit this page

Overview

Questions

- How do I use the RStudio IDE?

- What are the basics of R?

- What are the best practices for writing R code?

Objectives

- Understand how to interact with the RStudio IDE

- Demonstrate some R basics: operators, comments, functions, assignment, vectors and types

- Be aware of some good habits for writing R code

Why R?

The R language is one of the most commonly used languages in bioinformatics, and data science more generally. It’s a great choice for you because:

- Reproducibility: Scripting your analysis makes it easier for someone else to repeat and for you to re-use and extend

- Power:

Rcan be used to work with datasets larger than you can in Prism or Excel - Open source: So it’s free!

- Community:

Ris a popular choice for data science, so there are many resources available for learning and debugging - Packages: Since the community is large, many people have written helpful packages that can help you do tasks more easily than if you’d had to start from scratch

The other language most commonly used for data science is python. Some people might even consider it a better choice than R, because it doesn’t have some of the strange quirks that R does, and also has a large community of users (and all of the benefits that come with it).

I use both R and python, and most (but not all) people who do a lot of data science know how to use both. But today we’re learning R because I think it usually what I turn to when someone in the lab asks me for help analysing their data, and I think everyone can learn to use it for those same tasks too.

I made all of the material for this course (including the website and slides) in Rstudio using R. A little bit can take you a long way!

There’s more than one way to skin a cat

This course will teach a particular ‘dialect’ of R called the tidyverse. This is a collection of packages mostly written by Hadley Wickham, that focus on the concept of ‘tidy’ data.

You’ll soon discover that when coding, there are many ways of getting to the same output. Some of them are more efficient than others, and some of them are just easier to code than others. The tidyverse packages are hugely popular in the R community, and when I started I found them easy to work with.

However, if you stick with R long enough, you’ll probably end up needing to learn some additional base R (particularly if you use packages from outside the tidyverse). If you want to get a head start, I’ll include some links to resources at the end of this lesson.

Do I need to do this lesson?

Since we have a variety of skill levels and experiences in this course, every lesson will have a challenge at the start to check if the lesson contains anything new for you. If you can solve it, then you can skip the lesson.

Predict what will happen after every section of the following script (without running it in R).

Also be able to answer the question: what is a comment?

R

# section 1

100 ** 1

# section 2

a <- 4

b <- 3

a + b

# section 3

round(3.1415, digits=2)

# section 4

library(stats)

# section 5

stringr::str_length("what is the output?")

# section 6

as.integer(c(1.96,2.09,3.12))

# section 7

as.numeric(c("one", "two", "three"))

A comment is piece of text where each line starts with a hash (#). These are used to annotate code.

R

# section 1

100 ** 1

OUTPUT

[1] 100100 to the power of 1 is 100

R

# section 2

# assign the value 4 to variable a

a <- 4

# assign the value 3 to variable b

b <- 3

# add the values of a and b

a + b

OUTPUT

[1] 74 + 3 is 7

R

# section 3

round(pi, digits=2)

OUTPUT

[1] 3.14Using the round() function to round \(\pi\) to two decimal places gives the result 3.14

R

# section 4

library(stats)

No output, just loading the stats library.

R

# section 5

stringr::str_length("what is the output?")

OUTPUT

[1] 19You might need the help function for this one - there are 19 characters in the string.

R

# section 6

as.integer(c(1.96,2.09,3.12))

OUTPUT

[1] 1 2 3Coercing a vector of type double to integers truncates numbers to give integers.

R

# section 7

as.numeric(c("one", "two", "three"))

WARNING

Warning: NAs introduced by coercionOUTPUT

[1] NA NA NAThis one is tricky! Trying to coerce a string that isn’t only digits just results in NA values.

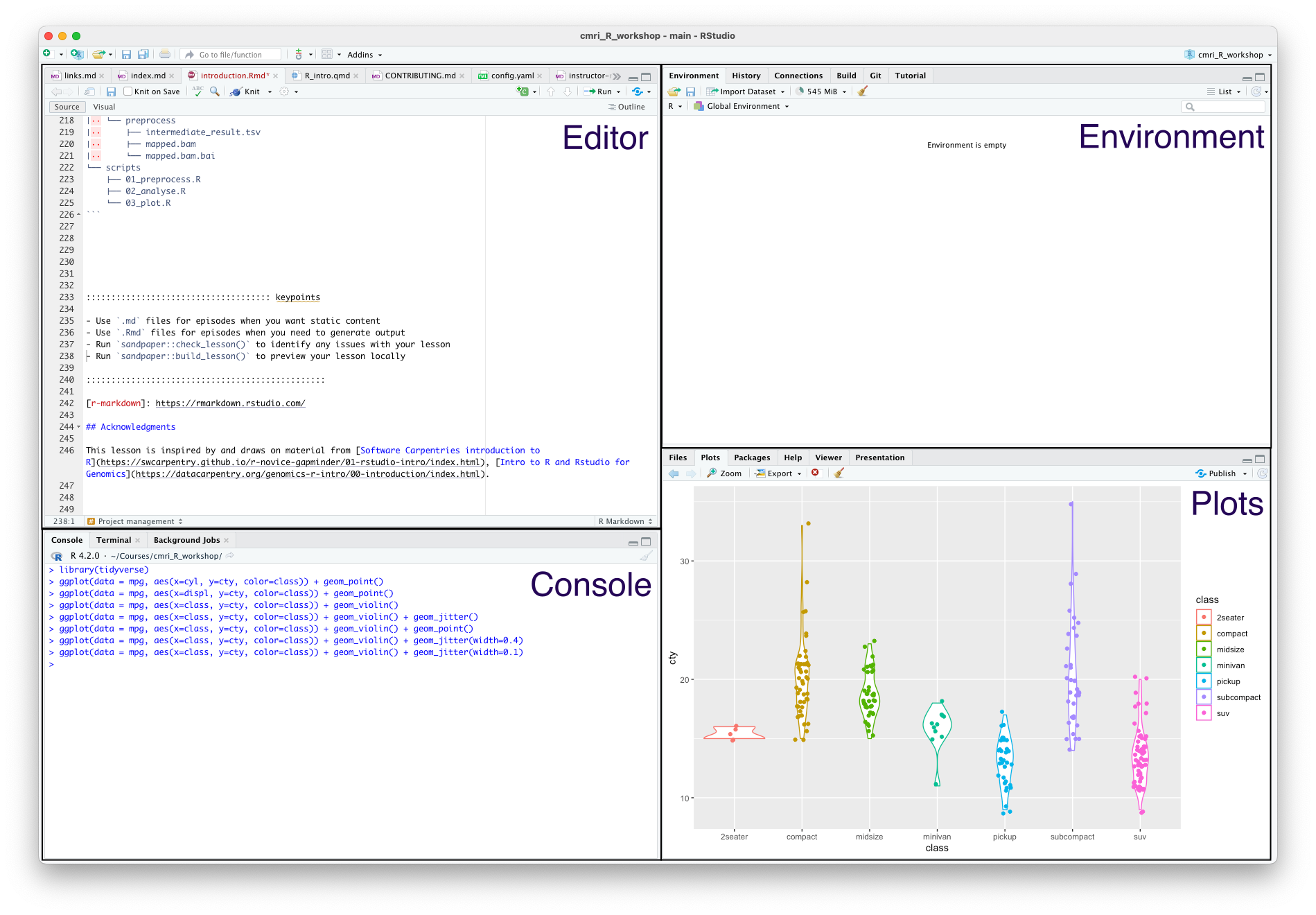

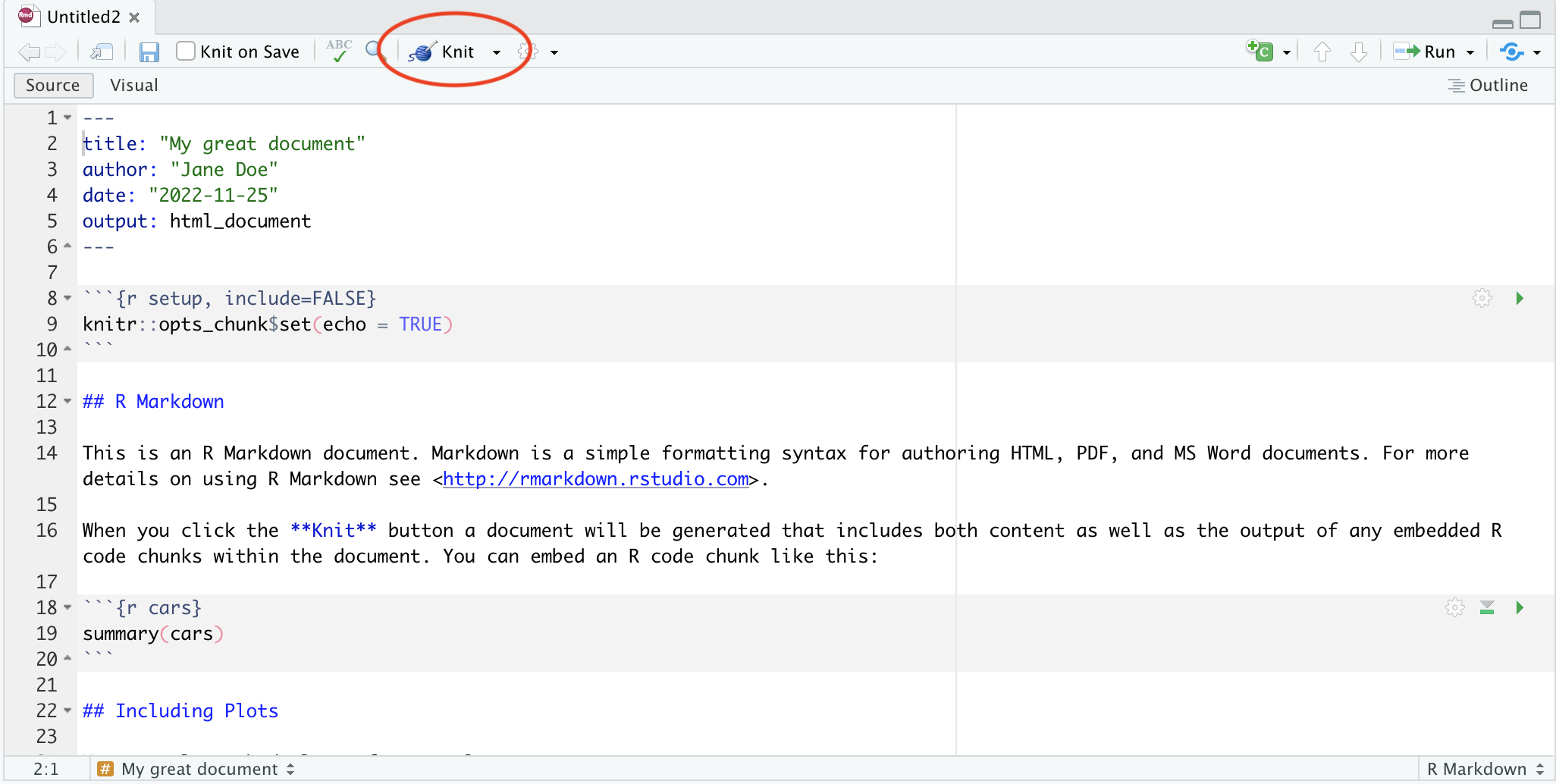

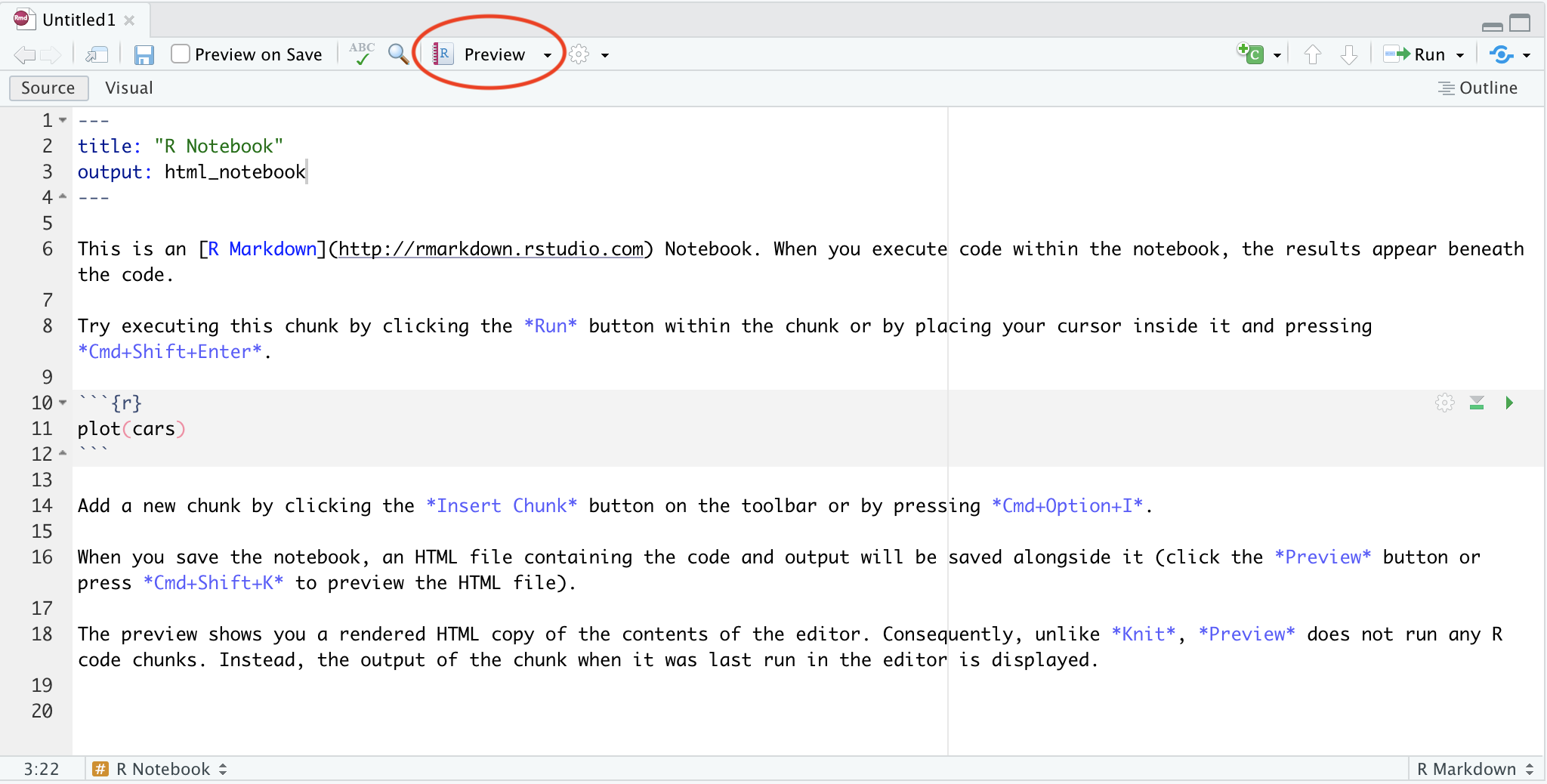

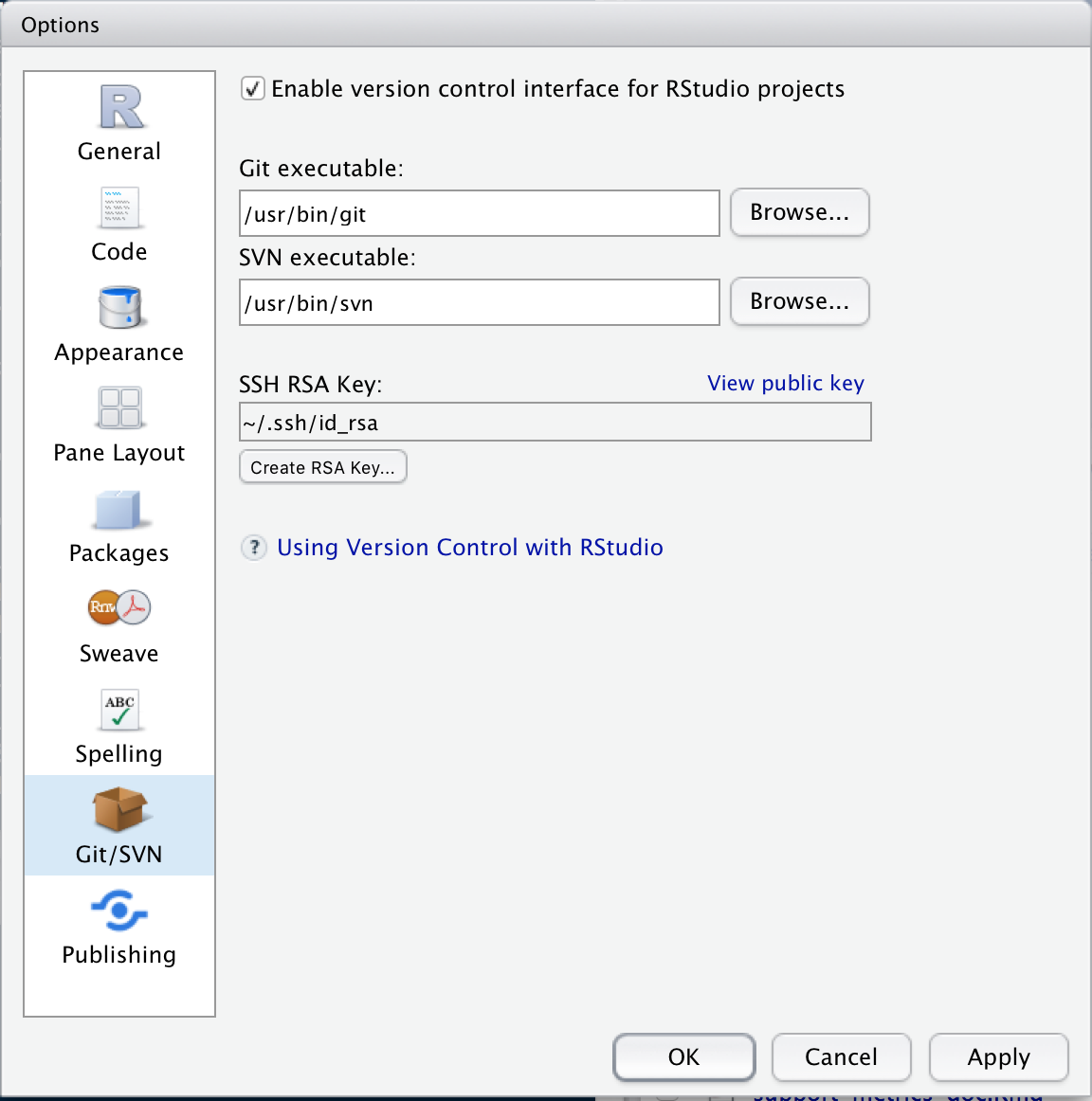

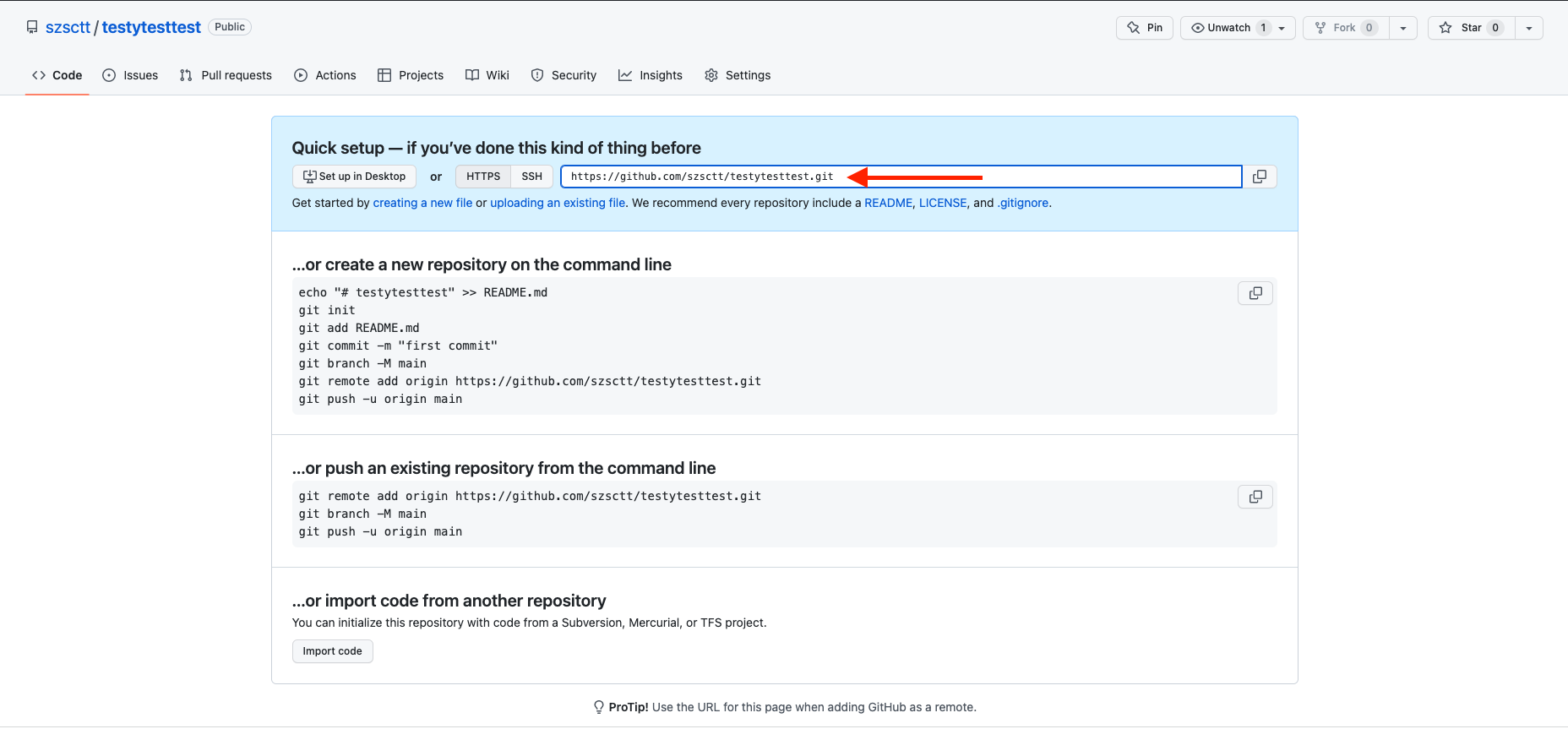

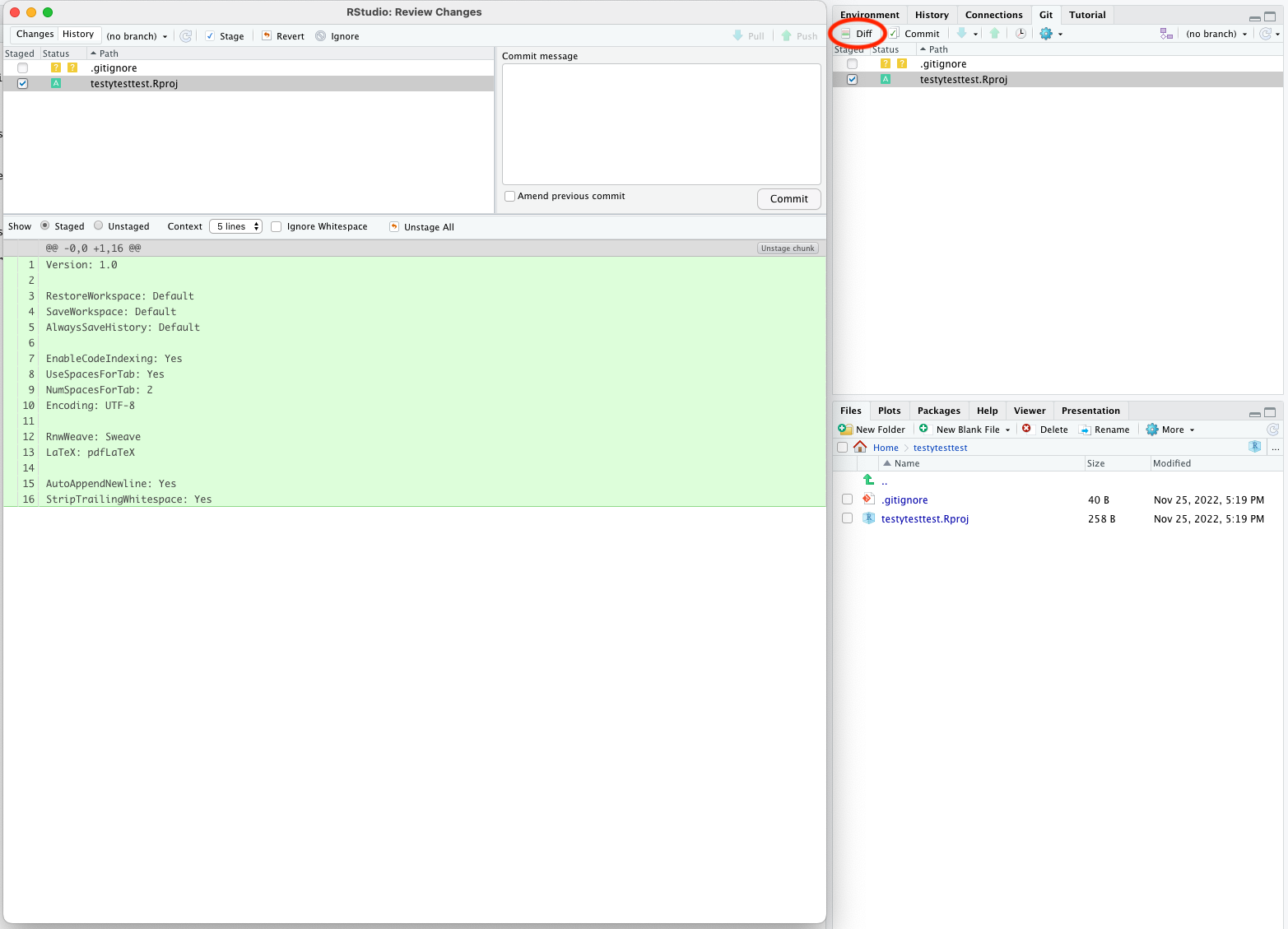

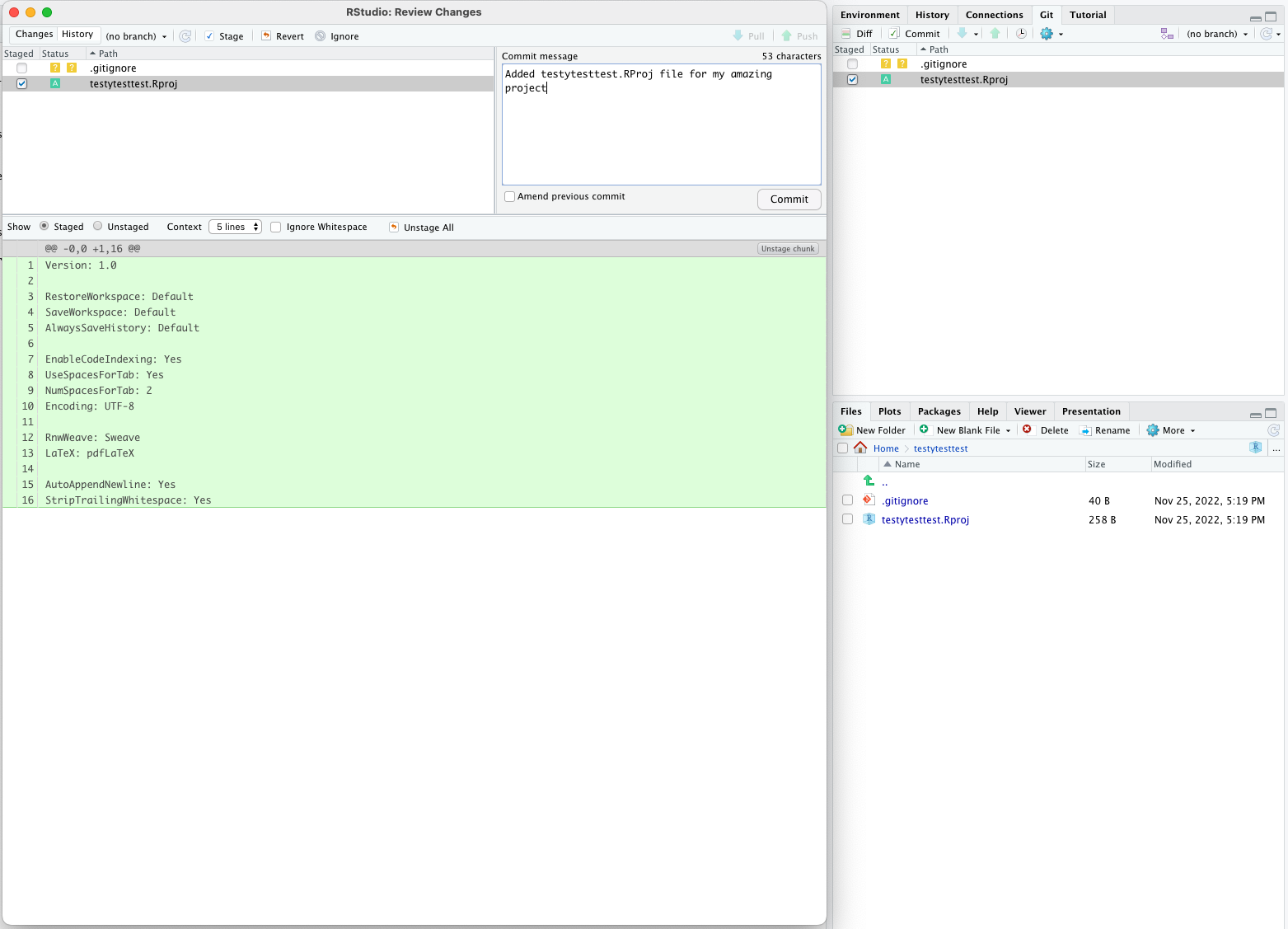

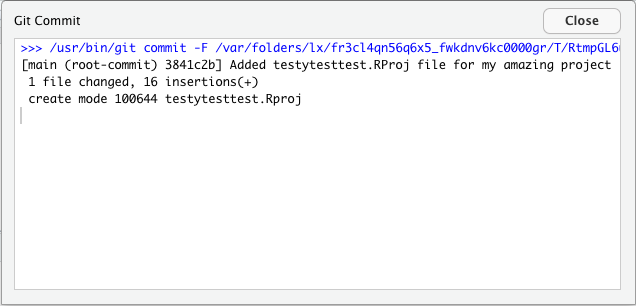

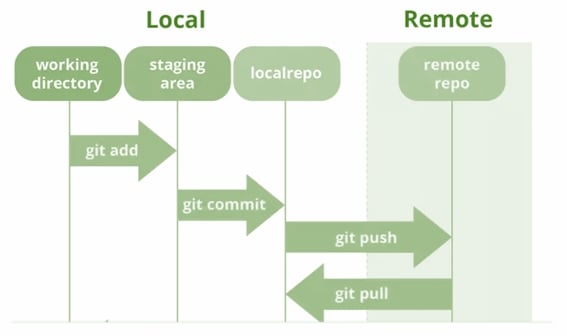

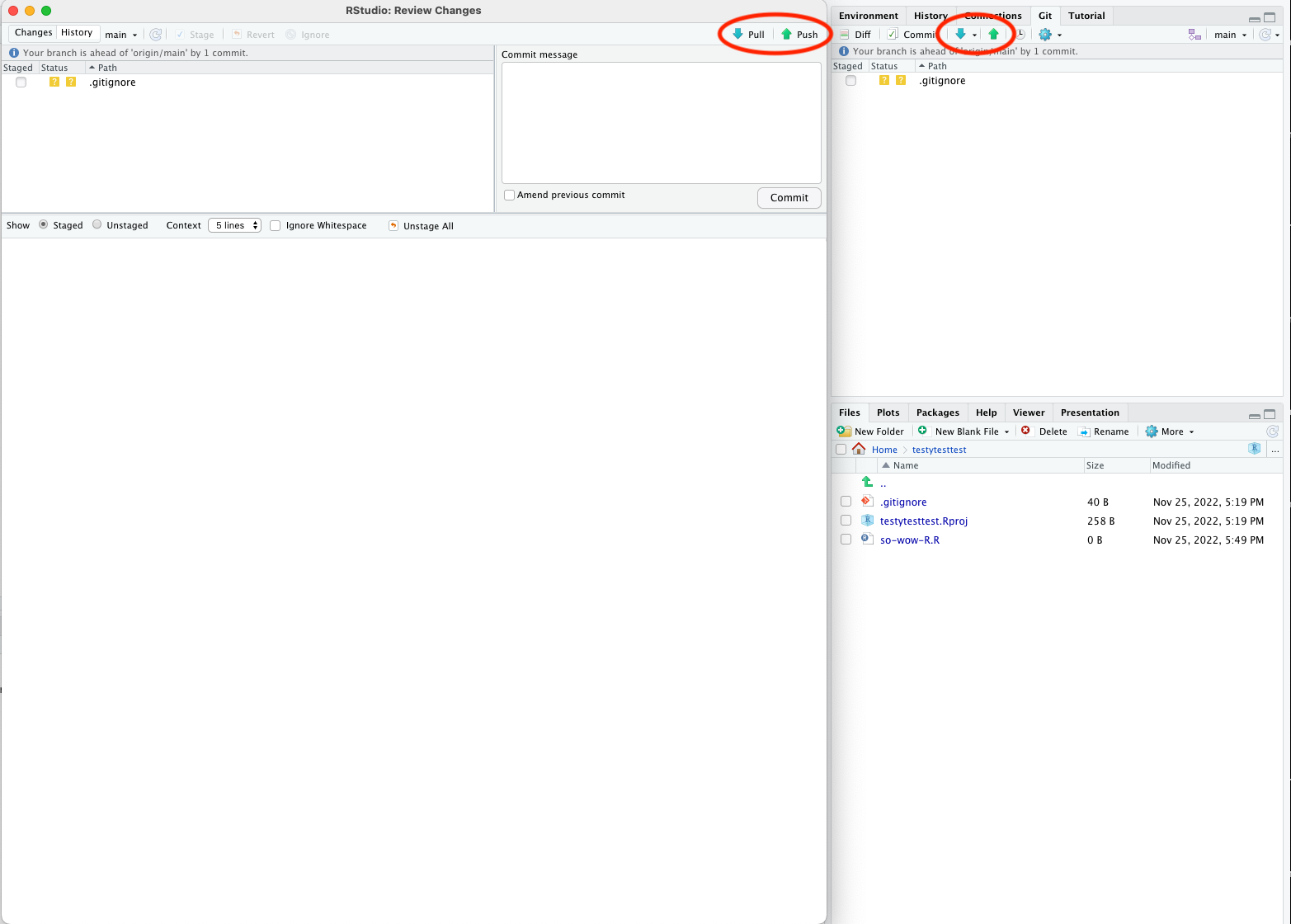

Introduction to RStudio

RStudio is an integrated development environment that makes it much easier to work with the R language. It’s free, cross-platform and provides many benefits such as project management and version control integration.

When you open it, you’ll see something like this:

The four main panes are:

- Top left: editor. For editing scripts and other source code

- Bottom left: console. For running code

- Top right: environment. Information about objects you create will appear here

- Bottom right: plots (among other things). Some output (like plots) from the console appears here. Also helpful for browsing files and getting help

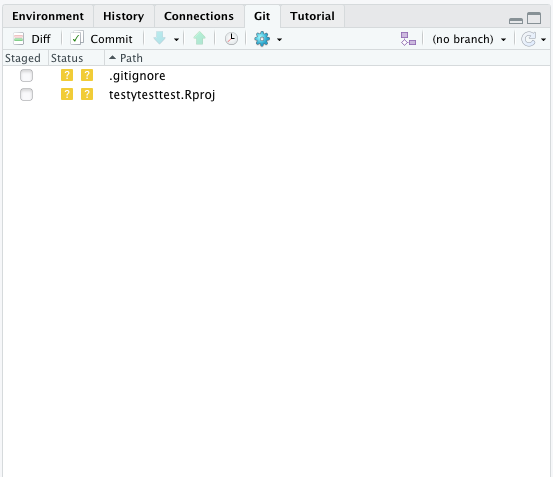

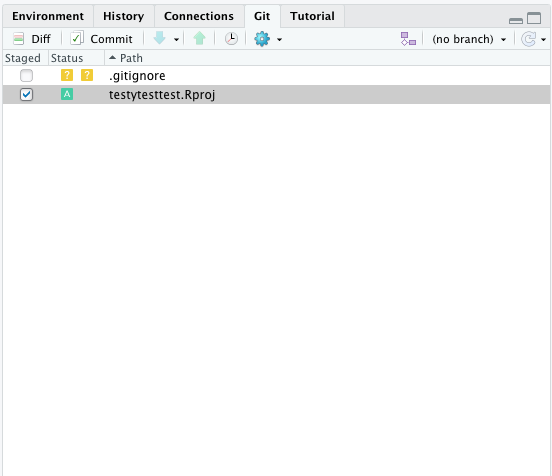

Organizing your work into projects

When you work in Rstudio, the program assumes that you’re working in a project. This is a directory that should contain all the files that you’ll need for whatever project you’re currently working.

If you’ve made a fresh install then by default you won’t be in a project. Otherwise, the current project will be the last one you opened.

Let’s make a new project for working in for this course. In the menu bar, click File > New Project, and decide if you’d like to start the new project in a New Directory or an Existing Directory, and then tell Rstudio where you want your project directory to be located.

Using the editor

The editor pane won’t appear in a new project, so open it up by making a new script. In the menu bar, click File > New File > R Script.

Although you can also type commands directly into the console pane (below), keeping your commands together in a script helps organise your analysis.

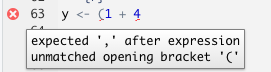

The editor also helps you identify issues with your code by placing a cross where it doesn’t understand something.

Hover over the cross to get more information

Using the console

The console is where you can type in R commands and see their output.

You can type R commands directly in here after the prompt (>). This prompt disappears while the code is running, and re-appears when it is done. To see this, try typing the following line of code into your console:

R

Sys.sleep(5)

The prompt disapers for five seconds, then comes back.

Rather than typing directly into the console, you can ‘send’ a line of code from the editor to the console by pressing Ctrl + Enter.

You can also run the whole script with Ctrl + Shift + Enter.

As a calculator

The most basic way we can use R is as a calculator:

R

1 + 1

OUTPUT

[1] 2If you type an incomplete command, you’ll see a +:

R

1 +OUTPUT

+Finish typing the command to get back to the prompt (>).

The order of operations is as you would expect:

- Parentheses

(,) - Exponents

^or** - Multiply

* - Divide

/ - Add

+ - Subtract

- - Modulus

%%

R

3 / (3 + 6)

OUTPUT

[1] 0.3333333You can also use R for logical expressions, like:

R

3 > 5

OUTPUT

[1] FALSEThe logical operators you’ll most likely use are

- Equal

== - Less than

< - Less than or equal to

<= - Greater than

> - Greater than or equal to

>=

You can also combine multiple logical statements, for example,

R

(3 > 5) | (4 < 5)

OUTPUT

[1] TRUEFor this, we use the operators:

- Logical or

| - Logical and

&

Writing R code

Next, we’ll look at some of the building blocks of R code. You can use these to write scripts, or type them directly into the console.

When performing data analysis, it’s best to record the steps you took by writing a script, which can be re-run if you need to reproduce or update your work.

Comments

The first building block is actually lines that are ignored by R: comments. Anything that comes after a # character won’t be executed. Use this to annotate your code.

R

# this is a comment

1 + 2 # this is also a comment

OUTPUT

[1] 3R

# the line below won't be executed

1 + 1

OUTPUT

[1] 2Comments is very important!!! A key part of reproducibility is knowing what code does. Make it easier for others and your future self by adding lots of comments to your code.

Assignment

If you want R to remember something, you can assign it to a variable:

R

my_var <- 1+1

print(my_var)

OUTPUT

[1] 2R

a <- 3

b <- 2

c <- (a * b) / b + 1

print(c)

OUTPUT

[1] 4Functions

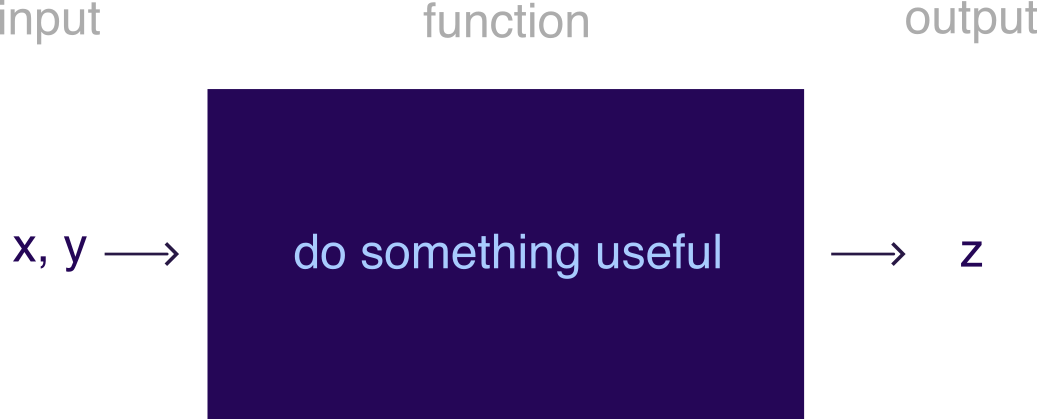

A function is just something that takes zero or more inputs, does something with it, and gives you back zero or more outputs.

R comes with many useful functions that you can use in your code. A function is always called with parentheses ().

For example, the getwd() function takes no inputs, and returns the current working directory.

R

# a function that takes no inputs

getwd()

Base R also has many mathematical and statistical functions:

R

# natural logarithm

log(1)

OUTPUT

[1] 0R

# rounding

round(0.555555, digits=3)

OUTPUT

[1] 0.556R

# statistical analysis

sample1 <- c(1, 2.5, 3, 1, 1.3, 4.6)

sample2 <- c(1000, 1001, 3000, 5000, 2022, 4000)

t.test(sample1, sample2)

OUTPUT

Welch Two Sample t-test

data: sample1 and sample2

t = -4.0073, df = 5, p-value = 0.01025

alternative hypothesis: true difference in means is not equal to 0

95 percent confidence interval:

-4379.9127 -956.6206

sample estimates:

mean of x mean of y

2.233333 2670.500000 You don’t need to memorize all these functions: Google is your friend.

RStudio can also help you with function usage. If you know what the name of a function is but can’t remember how to use it, you can type ? before the function name in the console to get help on that function.

R

?sin

If you don’t know what the name of a function is, you can type ?? before a key word to search the documentation for that key word.

R

??trig

Named arguments

Function arguments can be named implicitly or explicitly. When calling a function, explicitly named arguments are matched first, then any other arguments are matched in order.

For example, the documentation for the round function gives its signature: round(x, digits = 0).

The following function calls are all equivalent:

R

round(1/3, 3)

round(1/3, digits=3)

round(x=1/3, digits=3)

round(digits=3, x=1/3)

However, the last is not best practice: reversing the order of the arguments will likely be confusing for someone else reading your code.

In general, try to match the documentation when you call a function.

Which of the calls above matches the documentation for round()?

You can also write your own functions:

R

# function to add two numbers

my_add <- function(num1, num2) {

return(num1 + num2)

}

my_add(1,1)

OUTPUT

[1] 2R

?rnorm

R

rnorm(5, mean=1, sd=2)

OUTPUT

[1] -1.2508197 0.8529519 1.4416727 1.2751230 0.2460887Nested function calls

You can also nest function calls inside each other, for example:

R

mean(c(1,2,3))

OUTPUT

[1] 2This is equivalent to:

R

nums <- c(1,2,3)

mean(nums)

OUTPUT

[1] 2R

t.test( c(1, 2.5, 3, 1, 1.3, 4.6),

c(1000, 1001, 3000, 5000, 2022, 4000)

)

OUTPUT

Welch Two Sample t-test

data: c(1, 2.5, 3, 1, 1.3, 4.6) and c(1000, 1001, 3000, 5000, 2022, 4000)

t = -4.0073, df = 5, p-value = 0.01025

alternative hypothesis: true difference in means is not equal to 0

95 percent confidence interval:

-4379.9127 -956.6206

sample estimates:

mean of x mean of y

2.233333 2670.500000 Notice that we can spread one ‘line’ of code over multiple lines of text. This can help with readability, by visually separating the two samples we’re comparing.

Using libraries

One of the great things about R is that a lot of other people use it, so often somebody has worked out an efficient way of doing things that you can use. This way, you don’t have to build your code from the ground up; instead you can use functions that other people have written.

When you use other peoples’ functions, they will be packaged in to libraries (also called packages) that you can import. In order to use library, it first must be installed. R provides the function install.packages() which you can use to install libraries from CRAN (an online repository of libraries).

R

install.packages("library_name")

Note the use of quotation marks around the library name - this tells R that this is a string, rather than a variable (more on strings in the next section).

R

install.packages("tidyverse")

Package-ception

Most of the packages that you’ll use will come from CRAN, but you might come across other sources of packages that are also useful. These are usually installed by packages that you can get from CRAN.

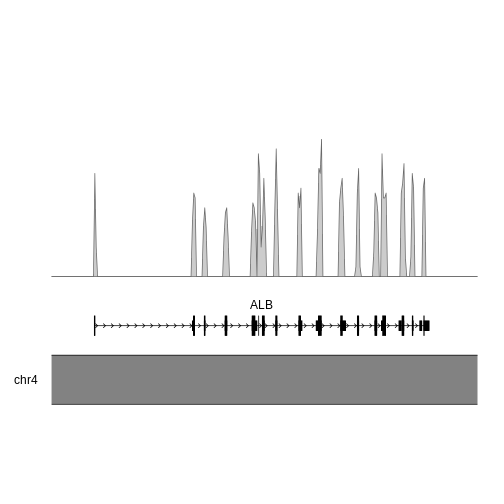

For example, the Bioconductor suite consists of packages that are useful for bioinformatics. To install any Bioconductor libraries, you’ll first need to install the BiocManager package from CRAN, and then use functions from this library to install Bioconductor packages.

R

# install BiocManager package

install.packages("BiocManager")

# use the install function from BiocManager to install the GenomicFeatures and karyoploteR libraries

BiocManager::install(c("GenomicFeatures", "karyoploteR"))

Another place you might install packages from is github. Many developers host their code for their package on github, and then release it to CRAN when they think it’s ready.

If you want to use the development version of a package (for example if you want to use a feature that hasn’t been released yet), you can get it directly from github using the devtools package. Be careful when you do this - you’ll get all the shiny newest features, but you might also run into new bugs that haven’t been fixed yet!

For example, if you want to install the development version of readr from github:

R

# install the devtools package

install.packages("devtools")

# use the install function from devtools to install readr

devtools::install_github("tidyverse/readr")

By default, the only functions that when you start up R are the base R functions. If you want to use any functions from libraries that you’ve installed, you’ll need to tell R which library they come from.

One way to do this is is to use the :: syntax: packagename::functionname(). For example, we can use the str_length() function from the stringr package as follows

R

stringr::str_length("testing")

OUTPUT

[1] 7R

str_length("testing")

ERROR

Error in str_length("testing"): could not find function "str_length"We get an error because R doesn’t know what the str_length() function is by default.

The other way to use functions from a library is to import all the functions in the library using the library() function. It’s best practice to put all the library() calls together at the top of your code, rather than sprinkling them throughout.

R

# import whole library

library(stringr)

# use the str_length function without ::

str_length("testing")

OUTPUT

[1] 7You can still use the packagename::functionname() syntax even if you’ve loaded the library. This makes it clear which library the function comes from, and some style guides recommend that you do this for every function you use.

Function conflicts

The order you load your packages is important. If two functions from two different packages you’ve loaded have the same name, the one you loaded last will be used. Sometimes packages will warn you about this - for example, when you load the tidyverse package (which is actually a collection of packages), you’ll see something like this.

R

library(tidyverse)

OUTPUT

── Attaching packages ─────────────────────────────────────── tidyverse 1.3.2 ──

✔ ggplot2 3.4.0 ✔ purrr 1.0.1

✔ tibble 3.1.8 ✔ dplyr 1.0.10

✔ tidyr 1.2.1 ✔ forcats 0.5.2

✔ readr 2.1.3

── Conflicts ────────────────────────────────────────── tidyverse_conflicts() ──

✖ dplyr::filter() masks stats::filter()

✖ dplyr::lag() masks stats::lag()This tells us that the filter() function from dplyr has overwritten (or ‘masked’) the filter() function from the stats package, and there is a similar conflict for lag().

You can still use stats::filter() in your code, but you must explicitly specify that you want to use thestats package by prefixing it with stats::. If you just use filter(), you’ll get the version from dplyr.

Not all packages warn you about conflicts, so be careful you’re using the function that you think you’re using! This can be a source of strange errors, so try adding packagename:: in front of your function calls if you this this might be happening.

There’s no wrong or right answers here, but you might come across people that have (strong) opinions in this area.

Usually, if I’m only using one or two functions from a library, I won’t import the whole library, but just use the packagename::functionname() syntax. If I’m going to be using a lot of functions from a library, I’ll use library().

Some people consider it best practice to always use packagename::functionname(), since then it’s clear which packages are being used and where. This also helps avoid conflicts between functions with the same name in different packages.

However, this style is quite verbose (and a little distracting), and some people might say that this makes code written this way less readable. In practice, I haven’t come across much code that always uses explicit package names.

Vectors

One of the most common data structures R is the vector. These are collections of elements (technically called ‘atomic vectors’) like numbers, which are arranged in a particular order.

Vectors are often created with the concatenation function c():

R

# create a vector of three numbers

a_vector <- c(1,2,3)

# order matters!

a_different_vector <- c(2,1,3)

In R, vectors contain data of only one type. Some of the types you might see are:

- Double: real numbers which can take on any value (like 1.13, 6, -1004.29)

- Integer: whole real numbers (like 1, 2, -1)

- Character: strings of characters, enclosed in either single or double quotes

- Logical: booleans, either

TRUEorFALSE

R

# we can use the seq function to create vectors with numbers in them

double_vec <- seq(1, 10)

# add 'L' to the end of a number to tell R that it's an integer

integer_vec <- seq(1L, 10L)

# strings can be enclosed in single or double quotes

character_vec <- c("this", 'is', "a", 'vector')

# logicals are either TRUE or FALSE

logical_vec <- c(TRUE, FALSE)

You can get at the individual elements of a vector using brackets ([):

R

# first element of double_vec

double_vec[1]

# third to fifth elements of integer_vec

integer_vec[3:5]

#first and fourth elements of character_vec

character_vec[c(1,4)]

Declaring types

In some programming languages, like C and java, the programmer must declare the type of a variable when it’s created. In these statically typed languages, a variable declared as an integer can’t store any other types of data. As a dynamically typed language, R takes a looser approach to variable types.

If you try to create a vector that contains more than one type of element, all the elements will be transformed into the ‘lowest common denominator’ type. For example, concatenating an integer and double will result in a double vector.

R

# concatenating a double and an integer

numeric_vec <- c(5.8, 10L)

# the typeof function tells you the type of a variable

typeof(numeric_vec)

OUTPUT

[1] "double"One way to think about this is that elements are always coerced into the type that results in the least amount of information loss. The integers are a subset of all real numbers, so we can easily represent an integer 10L as the numeric 10. But if we tried to convert the real number 5.8 to an integer, how do we deal with the .8 part?

R

unknown_type <- c(1L, 1, "one")

print(unknown_type)

OUTPUT

[1] "1" "1" "one"R

typeof(unknown_type)

OUTPUT

[1] "character"It’s a character vector.

Character vectors can be used to represent numbers, but numbers can’t easily be used to represent characters.

R also has a way of representing missing data: there’s a special value in every type called NA (not available).

R

vec <- c(1, 3, NA)

Having NA values can change the way that functions interact with your data. For example, how would you take the mean of three values: 1, 2, and NA?

R

nums <- c(1, 2, NA)

mean(nums)

OUTPUT

[1] NAR doesn’t know how to do this, so it just returns NA.

R

mean(nums, na.rm=TRUE)

OUTPUT

[1] 1.5Use the additional named argument na.rm=TRUE

Best practices

Now you know the basics of R, there are a few best practices that you should follow on your journey. These aren’t hard and fast rules, but principles that you should aim to follow to make your code better and more reproducible.

Formatting and readability matters

You should always be able to come back to your code after a long break (months, years) and easily understand what it does.

One thing that helps with this is to follow a style guide like this one for the tidyverse

This covers things like:

- Commenting: do this a lot! It’s better to have more comments than fewer

- Each script should start with a description of what it does or what it’s for

- When you have to name something, use a name that makes sense. You’re more likely to understand what is contained in variable

my_peptidethan you are if it was calledporxorowobljldfibllkmb - Syntax and spacing: use spaces and newlines to make your code more readable, not less

- Line length: try to make horizontal scrolling unnecessary, which usually means lines are less than 80 characters

Don’t copy/paste code

In general, try to avoid copy-pasting blocks of code.

If you find you need to make a change to that code, you’ll need to edit all the copies. If you do this, it’s easy to miss somewhere that you copied it, or make a mistake when you change it.

If you find yourself needing to do the same task many times, it’s usually better to write a function instead.

Fail fast

This is a concept from the world of start-ups, in which it refers to the practice of trying things out at an early stage.

If it works already, great, but if it doesn’t, then you can move on more quickly to something that might work better.

Test your expectations

Try to frequently test your code to see that it does what you expect it to do. Getting into this habit helps guard against bugs.

There are lots of different ways to do this, from just trying out the code with a few different inputs to automated unit testing

Don’t forget also to test that your code doesn’t do things that you don’t expect it to do as well!

R

# define a function

plus_three <- function(num) {

return( num + 3 )

}

# should return 11

plus_three(8)

# should return 2

plus_three(-1)

# should raise an error

plus_three("ten")

You can use the function stopifnot to help you check things automatically.

Project management

Aim to keep your data, scripts and output organized. RStudio helps you with this with Projects, which can be used to store all of the files related to a particular piece of analysis. People write whole papers about this, but here are a few suggestions:

- Treat raw data and metadata as read-only. Put it in a folder called

dataand don’t write anything to that folder except for more raw data - Put source code in a folder called

src - Put files generated during analysis in a folder called

outorresults. It shouldn’t matter if this folder gets deleted, since you should be able to re-create its contents using your data and scripts - A

READMEfile can be useful for a broad overview of the project, and for explaining how to run the analysis - Keep track of the packages required for your analysis using

renv(forRprojects only), orconda(more general but has a few gotchas forRpackages)

That is, an organized project might look something like this:

my_great_project

├── README.md

├── data

│ ├── dataset_1

│ │ ├── dataset_1.R1.fq.gz

│ │ └── dataset_1.R2.fq.gz

│ ├── dataset_2

│ │ ├── dataset_2_1.R1.fq.gz

│ │ ├── dataset_2_1.R2.fq.gz

│ │ ├── dataset_2_2.R1.fq.gz

│ │ └── dataset_2_2.R2.fq.gz

│ └── metadata

│ ├── metadata_1.tsv

│ └── metadata_2.tsv

├── renv

│ └── activate.R

├── results

│ ├── analyse

│ │ └── fold_change.tsv

│ ├── plot

│ │ ├── phylogeny.pdf

│ │ └── taxa.pdf

│ └── preprocess

│ ├── intermediate_result.tsv

│ ├── mapped.bam

│ └── mapped.bam.bai

└── scripts

├── 01_preprocess.R

├── 02_analyse.R

└── 03_plot.RLinks and acknowledgements

As you begin your journey with R, you might find it helpful to refer to one-page summaries (or ‘cheat sheets’) that other people have compiled. For example, there are cheat sheets for:

Here are some other useful links

- R for data science

- fasteR, a course teaching (mainly) base R

- Ten Simple Rules for Reproducible Computational Research

- More information about installing R packages

This lesson is inspired by and draws on some material from Software Carpentries introduction to R, Intro to R and Rstudio for Genomics.

Keypoints

- Use RStudio for writing and executing

Rcode - Add comments to your code by starting a line with

# - Assign values to variables using the assignment operator

<- - Functions covert inputs to outputs

- Vectors are a collection of values of the same type

- Following best practices will help the with the correctness and readability of your

Rcode

Content from Reading in data

Last updated on 2023-01-24 | Edit this page

Overview

Questions

- How can we get data into

R?

Objectives

- Understand the advantages of tidy data

- Use

readxlto import data from Microsoft Excel - Use

readrto import data from text files

Do I need to do this lesson?

If you’ve already used readr and readxl and here, you’ll probably already know the material covered in this section. If you can complete the following excercises, you can skip this lesson.

Codon table

Load in the data in human_codon_table.xlsx (the column names are codon, frequency and count).

Make sure to specify the column types and names. Make use of here::here() to generate the file path relative to the project root.

Weather data

Load the data in the two files weather_brisbane.csv and weather_sydney.csv into one data frame, creating a column called ‘file’ that contains the file from which each row originated.

Make sure to specify the column types and names. Make use of here::here() to generate the file path relative to the project root.

Loading the file human_codon_table.xlsx:

R

# this might be different depending on where you saved the data

cdn_path <- here::here("episodes", "data", "human_codon_table.xlsx")

df <- readxl::read_xlsx(cdn_path,

col_names=c("codon", "frequency", "count"),

col_types = c('text', "numeric", "numeric"))

glimpse(df)

ERROR

Error in glimpse(df): could not find function "glimpse"And the weather data:

R

library(readr)

wthr_path <- here::here("episodes", "data", c("weather_brisbane.csv", "weather_sydney.csv"))

# column types

col_types <- list(

date = col_date(format="%Y-%m-%d"),

min_temp_c = col_double(),

max_temp_c = col_double(),

rainfall_mm = col_double(),

evaporation_mm = col_double(),

sunshine_hours = col_double(),

dir_max_wind_gust = col_character(),

speed_max_wind_gust_kph = col_double(),

time_max_wind_gust = col_time(),

temp_9am_c = col_double(),

rel_humid_9am_pc = col_integer(),

cloud_amount_9am_oktas = col_double(),

wind_direction_9am = col_character(),

wind_speed_9am_kph = col_double(),

MSL_pressure_9am_hPa = col_double(),

temp_3pm_c = col_double(),

rel_humid_3pm_pc = col_double(),

cloud_amount_3pm_oktas = col_double(),

wind_direction_3pm = col_character(),

wind_speed_3pm_kph = col_double(),

MSL_pressure_3pm_hPa = col_double()

)

# read in data

weather <- read_csv(wthr_path, skip=10,

col_types=col_types, col_names = names(col_types))

glimpse(weather)

ERROR

Error in glimpse(weather): could not find function "glimpse"Reading in data

So you want to make some awesome plots using R. The first step you’ll need to take is to import your data.

When I first learnt R in a first-year statistics course, the way we did this was:

- Open an Excel spreadsheet with the data

- Select the data to be imported

- Copy to the clipboard (Cmd + c)

- Open an R terminal

- Enter the command

read.table(pipe("pbpaste"), header = TRUE)

This is a pretty convoluted way to do things! This weird process was part of the reason that, after I finished the course, I didn’t touch R again for almost ten years.

Luckily, in the meantime people worked out better ways of doing things. This lesson will cover two awesome packages which can help you import your data: readxl for Excel files, and readr for text files.

Reading in data from Excel: readxl

If you’ve entered data into a digital format, chances are that you’ve used spreadsheet software like Microsoft Excel. Excel makes data entry easy because it takes care of all of the formatting for you. But at some point, you’ll probably want to get your data out of Excel and into R. That’s where the readxl package comes in!

The workhorse of this package is the read_xlsx() function (or read_xls() for older excel files). It takes as input a path to the file you want to read.

R

# define path to excel file to read - this will probably be different for you

my_excel_sheet <- here::here("episodes", "data", "readxl_example_1.xlsx")

# read in data

my_excel_data <- readxl::read_xlsx(my_excel_sheet)

my_excel_data

OUTPUT

# A tibble: 3 × 3

word count number

<chr> <chr> <dbl>

1 prematurely one 4

2 airconditioned two 5

3 supermarket three 6File paths with here::here()

Note that I use a function called here to create the file path to the excel file. You can read more about this function here.

Using this function helps with cross-platform compatibility (so it works on Windows as well as MacOSX and Linux). This is because directories on Windows are usually separated with a backslash \, whereas on Unix-based distributions (like MacOS and Linux) directories are separated by a slash /.

You can check what the file path looks like on your platform by printing out the variable we defined with the file path:

R

my_excel_sheet

OUTPUT

[1] "/home/runner/work/cmri_R_workshop/cmri_R_workshop/episodes/data/readxl_example_1.xlsx"Using here::here() always generates paths relative to the project root (the file created by RStudio when you make a new project, which has the file extension .Rproj). If you move the project around on your filesystem (or send it to someone else), the import will still work. This wouldn’t be the case if you manually specified the absolute path to the file.

Note that this example file path is a little convoluted, because of the way the lessons are created. Yours should be more straightforward and look more like this:

R

my_excel_sheet <- here::here("data", "readxl_example_1.xlsx")

Tibbles

Coming back to the output of the call to readxl::read_xlsx() - calls to this function return a tibble object with the data contained in the excel spreadsheet.

The tibble object is a convenient way of storing rectangular data, where column has a type, (which is shown when you print the table). Ideally, in a tibble each column should contain data from one variable, and each row consists of observations of each variables - this is known as ‘tidy data’ (more on this in the next lesson).

If you don’t specify the types (like character, integer, double) of each column, readxl will try to guess them based on the types in the excel spreadsheet. This can lead to unexpected behaviour, especially if you mix numbers and strings in the same columns.

Check the documentation to read more about how to specify column types in readxl.

R

# read in data

data <- readxl::read_xlsx(here::here("episodes", "data", "readxl_example_2.xlsx"))

head(data)

OUTPUT

# A tibble: 3 × 5

replicate `drug concentration` `assay 1` `assay 2` `assay 3`

<dbl> <chr> <chr> <chr> <lgl>

1 1 10 uM ++ 1 TRUE

2 2 20 uM +++ medium FALSE

3 2 20 uM + high FALSE Note that:

- The ‘replicate’ column has a type of

dbleven though it contains only integers. - The ‘drug concentration’ column is of type

chrbecause R doesn’t know that ‘uM’ is a unit. - Mixing numbers and characters in the ‘assay 2’ column results in a column of characters (why do you think this is?)

The type of the columns is important to be aware of because some functions in R expect that you feed them columns of a particular type. For example, we can extract the ‘drug concentration’ column from the second file we imported using the $ operator.

R

conc <- data$`drug concentration`

conc

OUTPUT

[1] "10 uM" "20 uM" "20 uM"We can then extract the first two characters of each string in this column using stringr::str_sub():

R

nums <- stringr::str_sub(conc, 1, 2)

But we can’t add one to this column:

R

conc + 1

ERROR

Error in conc + 1: non-numeric argument to binary operatorSpecifying column types

Since the guessing of types can result in unexpected behaviour, it’s best to always specify the types of data when you import it. You can do this in a verbose way:

R

data <- readxl::read_xlsx(here::here("episodes", "data", "readxl_example_2.xlsx"),

col_types = c("numeric", "text", "text", "text", "numeric"))

WARNING

Warning: Coercing boolean to numeric in E2 / R2C5WARNING

Warning: Coercing boolean to numeric in E3 / R3C5WARNING

Warning: Coercing boolean to numeric in E4 / R4C5R

data

OUTPUT

# A tibble: 3 × 5

replicate `drug concentration` `assay 1` `assay 2` `assay 3`

<dbl> <chr> <chr> <chr> <dbl>

1 1 10 uM ++ 1 1

2 2 20 uM +++ medium 0

3 2 20 uM + high 0Note that the last column (‘assay 3’) contains Boolean (TRUE/FALSE) values, but we coerced it to a numeric type when we imported it. R prints a warning to let us know.

Other options

readxl::read_xlsx() also has lots of other options to deal with other aspects of data imports - for example, you can:

- Use the

sheetandrangenamed arguments to specify which parts of the sheet to import - Skip a number of header rows using the

skipoption, - Provide a vector of your own column names using the

col_namesargument - Pass

col_names=FALSEto avoid using the first line of the file as your column names if your data doesn’t have column names

Challenge 2: readxl

Open the file human_codon_table.xlsx in Excel. The columns of this table contain codons, frequencies of observations of each codon, and counts of each codon.

How do you think it should be imported?

Import this data, including the col_names, col_types, sheet and range named arguments.

R

readxl::read_xlsx(here::here("episodes", "data", "human_codon_table.xlsx"),

col_names = c("codon", "frequency", "count"),

col_types = c('text', 'numeric', 'numeric'),

sheet = 'human_codon_table',

range = 'A2:C65' )

OUTPUT

# A tibble: 64 × 3

codon frequency count

<chr> <dbl> <dbl>

1 UCU 15.2 618711

2 UAU 12.2 495699

3 UGU 10.6 430311

4 UUC 20.3 824692

5 UCC 17.7 718892

6 UAC 15.3 622407

7 UGC 12.6 513028

8 UUA 7.7 311881

9 UCA 12.2 496448

10 UAA 1 40285

# … with 54 more rowsReading in data from text files: readr

Alternatively, you might have tabular data in a text file. In these kinds of files, each row of the file is a row of the table, and usually either tabs or commas separate the values in each column.

If you have comma-separated data (like a .csv file) the readr::read_csv() function will be most convenient, and if your data is tab-separated (like a .tsv file), you’ll want readr::read_tsv(). If you have a different delimiter, you can use readr::read_delim(file, delim=delimiter), where delimiter is a string containing your delimiter.

The syntax is very similar to read_xlsx(), and you should specify the column types here as well. Here I use the short form of the column specification - ‘c’ for character and ‘i’ for integer.

R

data <- readr::read_csv(here::here("episodes", "data", "readr_example_1.csv"),

col_types = c("cci"))

data

OUTPUT

# A tibble: 3 × 3

word count number

<chr> <chr> <int>

1 prematurely one 4

2 airconditioned two 5

3 supermarket three 6You can read more about the column specification for readr functions here.

The other options for read_csv() are also similar to those for read_xslx, including col_names and skip.

Reading multiple files

If you have multiple files with the same columns and column types, you can pass a vector of file paths rather than an atomic vector. To add an extra column with the file name (so you know which rows came from which files), use the id parameter.

R

files <- c(here::here("episodes", "data", "sim_0_counts.txt"),

here::here("episodes", "data", "sim_1_counts.txt"))

data <- readr::read_tsv(files, col_types = 'cci', id="file")

head(data)

OUTPUT

# A tibble: 6 × 4

file test0 test1 count

<chr> <chr> <chr> <int>

1 /home/runner/work/cmri_R_workshop/cmri_R_workshop/episodes/… test… PRR 2

2 /home/runner/work/cmri_R_workshop/cmri_R_workshop/episodes/… test… AVSM 1

3 /home/runner/work/cmri_R_workshop/cmri_R_workshop/episodes/… test… IGLF 1

4 /home/runner/work/cmri_R_workshop/cmri_R_workshop/episodes/… test… no_i… 1039

5 /home/runner/work/cmri_R_workshop/cmri_R_workshop/episodes/… test… R 82

6 /home/runner/work/cmri_R_workshop/cmri_R_workshop/episodes/… test… DI 3Resources

Content from Tidy data

Last updated on 2023-01-24 | Edit this page

Overview

Questions

- What is tidy data?

- How can we pivot data frames to make them tidy?

Objectives

- Understand the principles of tidy data

- Use

pivot_longer()andpivot_wider()to create longer or wider data frames

Check Challenge 1 for the solution!

Tidy data

When recording observations, we don’t often give that much thought to how we record the data. Usually we enter data into an excel spreadsheet and care most about entering the data in the easiest way. However, this often results in ‘messy’ data that requires a little bit of massaging before analysis.

There are many possible ways to structure a dataset. For example, if we conducted an experiment where we made two sequencing libraries, in which we have counted the instances of six different barcodes, we could structure the data like this:

OUTPUT

# A tibble: 2 × 7

library b1 b2 b3 b4 b5 b6

<chr> <int> <int> <int> <int> <int> <int>

1 lib1 384 796 109 404 106 812

2 lib2 102 437 391 782 101 789In this table, the counts for each barcode are stored in a separate column. The ‘library’ column tells us which library the counts on each row are from.

Conversely, we could keep the counts for each library in a separate column, and the rows can tell us which barcode is being counted:

OUTPUT

# A tibble: 6 × 3

barcode lib1 lib2

<chr> <int> <int>

1 b1 384 102

2 b2 796 437

3 b3 109 391

4 b4 404 782

5 b5 106 101

6 b6 812 789We could even structure the table like this:

OUTPUT

# A tibble: 2 × 13

library `384` `796` `109` `404` `106` `812` `102` `437` `391` `782` `101`

<chr> <chr> <chr> <chr> <chr> <chr> <chr> <chr> <chr> <chr> <chr> <chr>

1 lib1 b1 b2 b3 b4 b5 b6 <NA> <NA> <NA> <NA> <NA>

2 lib2 <NA> <NA> <NA> <NA> <NA> <NA> b1 b2 b3 b4 b5

# … with 1 more variable: `789` <chr>This is one of the least intuitive ways to structure the data - the columns are the counts (except for the library column), and the rows tell us which barcode had which count.

These are all examples of messy ways to structure the data. There are usually very many ways to make the data messy, but only one ‘Tidy’ format. Being tidy means a dataset has:

- Each variable forms a column.

- Each observation forms a row.

- Each type of observational unit forms a table.

Here, variables record all the values that measure the same attribute (for example library, sample, count), and an observation contains all the measurements on a single unit (like a sequencing run, or a single experiment). Each observational unit (like independent experiments with different variables) should get its own table. This just means that if we ran another experiment with different variables, it should go in a different table.

In our this case, the tidy version looks like this:

R

table1

OUTPUT

# A tibble: 12 × 3

library barcode count

<chr> <chr> <int>

1 lib1 b1 384

2 lib1 b2 796

3 lib1 b3 109

4 lib1 b4 404

5 lib1 b5 106

6 lib1 b6 812

7 lib2 b1 102

8 lib2 b2 437

9 lib2 b3 391

10 lib2 b4 782

11 lib2 b5 101

12 lib2 b6 789This is tidy because each column represents a variable (library, barcode and count), each row is an observation (count for a given library and barcode), and we have all the data from this experiment in the one table.

tidyr for tidying data

So if tidy data is the goal, how do we get there? One way is to go back and edit the original file manually. But this is problematic for several reasons:

- You should treat your original data as read-only, and never change it

- Manual edits are not reproducible

- Manual edits can be time-consuming

Instead, we can use tools from the tidyr and dplyr packages for tidying and manipulating tables of data.

Pivoting

The variants on the tables that we saw above are all generated by pivoting the tidy (or ‘long’) version to untidy (in this case, ‘wide’) versions. For example, looking at table1b:

R

table1b

OUTPUT

# A tibble: 6 × 3

barcode lib1 lib2

<chr> <int> <int>

1 b1 384 102

2 b2 796 437

3 b3 109 391

4 b4 404 782

5 b5 106 101

6 b6 812 789Another way to think about tidiness is if the names of the column can reflect the data contained in them. It’s a bit misleading to call the columns lib1 and lib2, they actually store counts (and not some other property of the library, such as the barcodes it contains).

We can see that if we were to restructure the lib1 and lib2 columns into two different columns, one that tell us from which library the count came, and one that contains the count value, the dataset would be tidy.

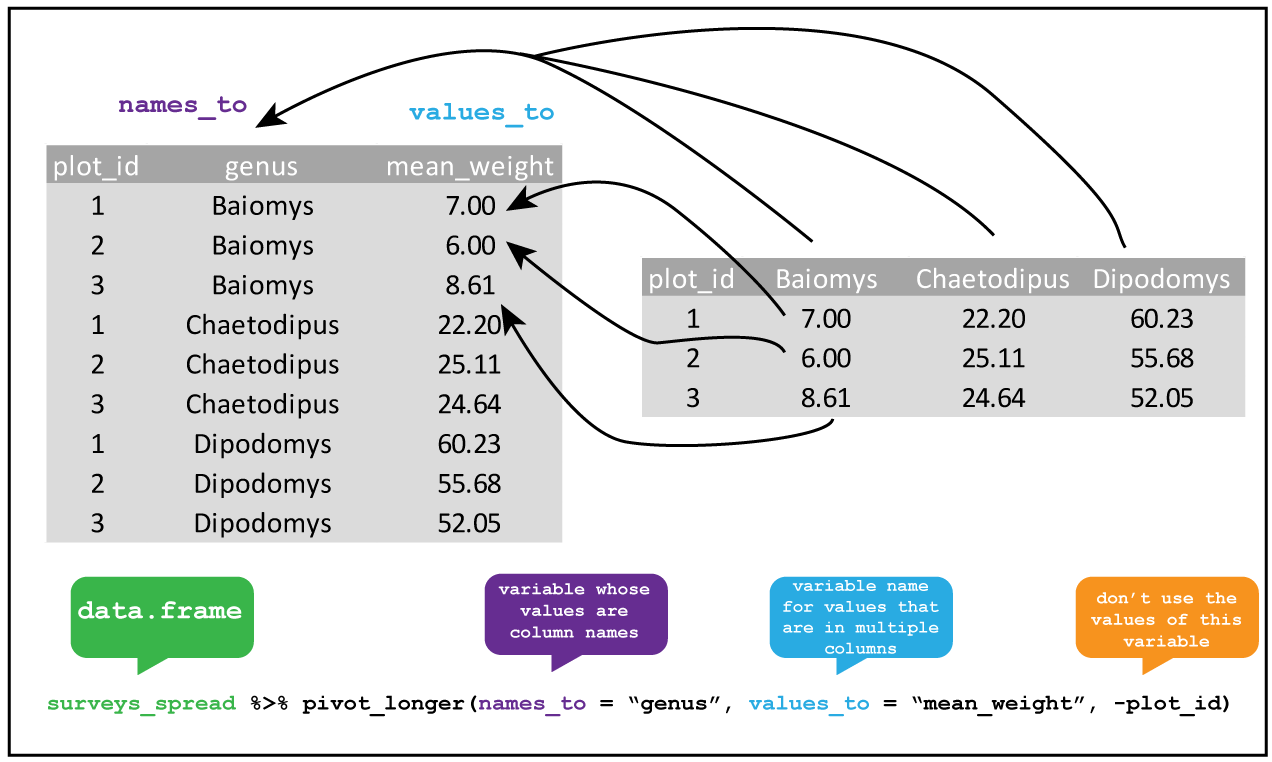

Consolidating columns with pivot_longer()

Knowing this, we can tidy the dataset up using pivot_longer().

R

library(tidyverse)

table1b %>%

pivot_longer(lib1:lib2, names_to="library", values_to="count")

OUTPUT

# A tibble: 12 × 3

barcode library count

<chr> <chr> <int>

1 b1 lib1 384

2 b1 lib2 102

3 b2 lib1 796

4 b2 lib2 437

5 b3 lib1 109

6 b3 lib2 391

7 b4 lib1 404

8 b4 lib2 782

9 b5 lib1 106

10 b5 lib2 101

11 b6 lib1 812

12 b6 lib2 789We can tell that this data is tidy because the column names accurately reflect the data they contain: the count column stores counts, the library column tells us which library the counts came from, and the barcode column tells us which barcode we’re measuring.

Data pipelines with pipes (%>%)

In the code block above, we saw a strange collection of symbols (%>%) after the variable that contained our data. What does it do?

This construct is called the pipe, and comes from the magrittr package (which is part of the tidyverse). You can either type out the three characters seperatly, or use the shortcut Ctrl + Shift + m to insert a pipe.

Let’s consider an example. Say that we have a vector called counts that represents the counts for several vectors in a selection experiment, and we wanted to identify the unique counts, take their log, and then take the mean.

One way to do this is to call each function sequentially, assigning each to an intermediate variable:

R

# generate binomial data to play with

counts <- rbinom(10000, 50, 0.5)

unique_counts <- unique(counts)

log_counts <- log(unique_counts, 10)

mean_log_counts <- mean(log_counts)

This is a bit inefficient because we’ve had to type out the same variable name twice. I often find that when I do things this way, when I change one of the steps but forget to change both the variable names, and end up with weird bugs.

An alternative is to nest all the function calls inside each other:

R

mean_log_counts <- mean(log(unique(counts), 10))

Hopefully you can see why this isn’t a good idea - nested function calls can get very messy, very quickly, especially if the functions have multiple arguments (like log()).

A third way is using the pipe %>% operator, which feeds the output of each function into the next:

R

mean_log_counts <- counts %>%

unique() %>%

log(10) %>%

mean()

This version of the code is cleaner, and the top-to-bottom flow makes the order the functions are applied in more obvious. This way of doing things is very popular, and you’ll see it a lot (for example, in code sample on stack overflow).

There are a few complications to using the pipe which you can read more about here. One that’s worth being aware of is that using lots of pipes can result in long pipelines, and if something goes wrong somewhere in the middle it can be hard to track down exactly where the issue is. In this case, you can use a few intermediate variables to try to figure out which step is going wrong.

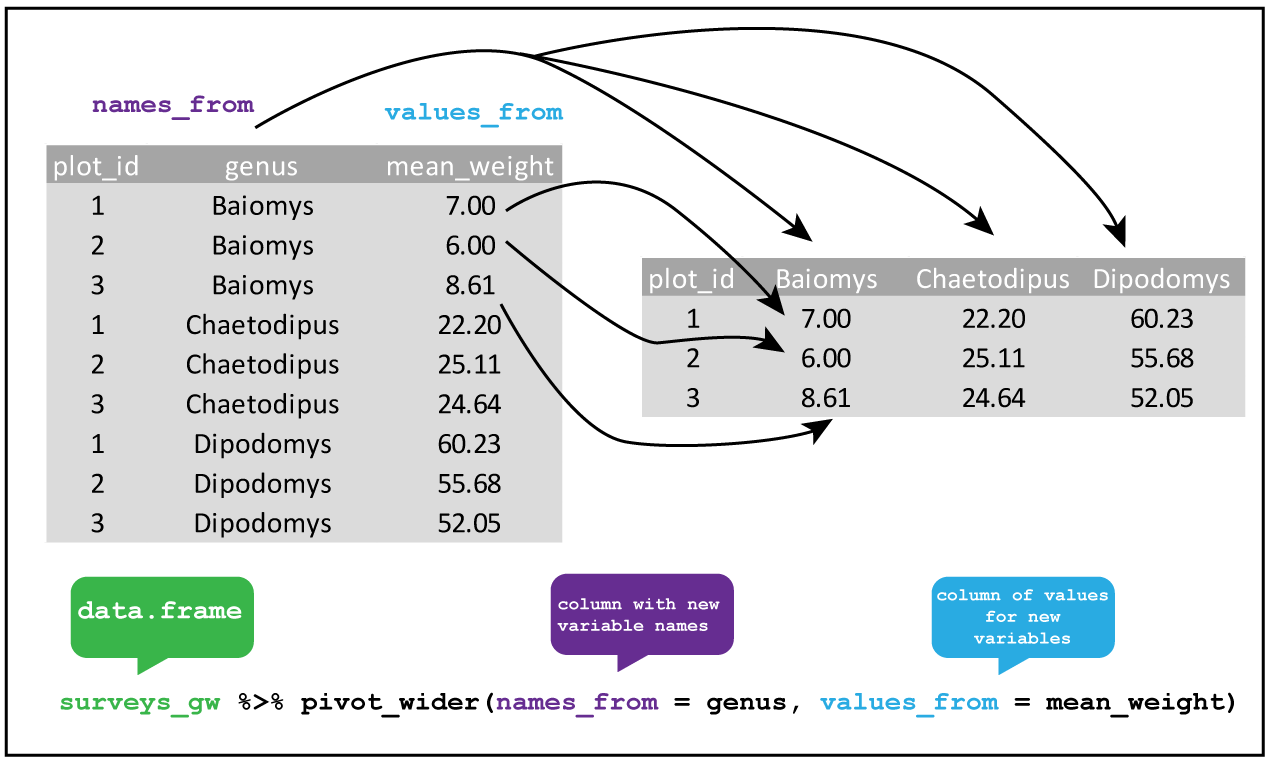

Creating more columns with pivot_wider()

We can also do the reverse operation using pivot_wider() (this was how I generated the untidy versions of the tables). But haven’t we just been saying that we should always aspire to keep our data tidy? So why would we ever want to do this?

When working with data in R, the long format does tend to be the most useful, because this is the format that most functions expect data to be in. However, if you want to display the data for a person (rather than for a function in code), the wide format can sometimes be useful for making comparisons. For example, we can easily compare the counts for each barcode in the two libraries in table1b, but the comparison is easy for a person to make when the table is in a long form.

R

table1

OUTPUT

# A tibble: 12 × 3

library barcode count

<chr> <chr> <int>

1 lib1 b1 384

2 lib1 b2 796

3 lib1 b3 109

4 lib1 b4 404

5 lib1 b5 106

6 lib1 b6 812

7 lib2 b1 102

8 lib2 b2 437

9 lib2 b3 391

10 lib2 b4 782

11 lib2 b5 101

12 lib2 b6 789

We can pivot our long table to a wide one using pivot_longer().

R

table1 %>%

pivot_wider(names_from = "library", values_from = "count")

OUTPUT

# A tibble: 6 × 3

barcode lib1 lib2

<chr> <int> <int>

1 b1 384 102

2 b2 796 437

3 b3 109 391

4 b4 404 782

5 b5 106 101

6 b6 812 789Other tidyr functions

Tidyr also has a number of other useful functions for tidying up datasets, which include: - Combining columns where multiples pieces of information are spread out (unite()), or spreading out columns which contain data that should be in separate column (separate()) - Adding extra rows to create all possible combinations of the values in multiple columns (expand() and complete) - Handling missing values, for example replacing NA values with something else (replace_na()) or dropping rows that contain NA (drop_na()) - Nesting data within list-columns (nest()), and unnesting such columns (unnest())

While useful, I tend to use these less frequently compared to the pivoting functions, so I leave these to you to explore on your own. You can get an overview of these functions on the tidyr cheatsheet, and in the tidyr documentation.

R

fp <- here::here("episodes", "data", "untidy_data.xlsx")

df <- readxl::read_xlsx(fp, col_names = c("batch_1", "batch_2"),

col_types = c("numeric", "numeric"))

ERROR

Error: `path` does not exist: '/home/runner/work/cmri_R_workshop/cmri_R_workshop/site/built/episodes/data/untidy_data.xlsx'R

df %>%

# we don't know what the values represent, so just call the column 'value'

pivot_longer(contains("batch"), names_to = "batch", values_to = "value") %>%

# drop rows that have an NA value

drop_na(value)

ERROR

Error in UseMethod("pivot_longer"): no applicable method for 'pivot_longer' applied to an object of class "function"Resources and acknowledgments

- tidyr cheatsheet

- Tidy data vignette

- Tidy data paper

- I’ve borrowed figures (and inspiration) from the excellent coding togetheR course material

Content from Data wrangling

Last updated on 2023-01-24 | Edit this page

Overview

Questions

- What are the main

dplyrverbs? - How can I analyse data within groups?

Objectives

- Know how to use the main

dplyrverbs - Use

group_by()to aggregate and manipulate within groups

Do I need to do this lesson?

If you already have experience with dplyr, you can skip this lesson if you can answer all the following questions.

- Load in the weather data from the

readrandreadxl‘do I need to do this lesson’ challenge. In the process, create a column calledfilethat contains the filename for each row. - Create a column called

citywith the name of the city (‘brisbane’, or ‘sydney’). - What is the median minimum (

min_temp_c) and maximum (max_temp_c) temperature in the observations for each city? - Count the number of days when there were more than 10 hours of sunshine (

sunshine_hours) in each city. - A cold cloudy day is one where there were fewer than 10 hours of sunshine, and the maximum temperature was less than 15 degrees. A hot sunny day is one where there were more than 10 hours of sunshine, and the maximum temperature was more than than 25 degrees. Calculate the the mean relative humidity at 9am (

rel_humid_9am_pc) and 3pm (rel_humid_3pm_pc) on days that were hot and sunny, cold and cloudy, or neither. - What is the mean maximum temperature on the 5 hottest days in each city?

- Add a column ranking the days by minimum temperature for each city, where the coldest day for each is rank 1, the next coldest is rank 2, etc.

- Generate a forecast for each city using the code below. If a cloudy day is one where there are 10 or fewer hours of sunshine, on how many days was the forecast accurate?

R

library(lubridate)

OUTPUT

Loading required package: timechangeOUTPUT

Attaching package: 'lubridate'OUTPUT

The following objects are masked from 'package:base':

date, intersect, setdiff, unionR

# generate days

days <- seq(ymd('2022-11-01'),ymd('2022-11-29'), by = '1 day')

# forecast is the same in each city - imagine it's a country-wide forecast

forecast <- tibble(

date = rep(days)

) %>%

# toss a coin

mutate(forecast = sample(c("cloudy", "sunny"), size = n(), replace=TRUE))

ERROR

Error in tibble(date = rep(days)) %>% mutate(forecast = sample(c("cloudy", : could not find function "%>%"R

# 1. Load in the weather data from the `readr` and `readxl` 'do I need to do this lesson' challenge

library(tidyverse)

OUTPUT

── Attaching packages ─────────────────────────────────────── tidyverse 1.3.2 ──

✔ ggplot2 3.4.0 ✔ purrr 1.0.1

✔ tibble 3.1.8 ✔ dplyr 1.0.10

✔ tidyr 1.2.1 ✔ stringr 1.4.1

✔ readr 2.1.3 ✔ forcats 0.5.2

── Conflicts ────────────────────────────────────────── tidyverse_conflicts() ──

✖ lubridate::as.difftime() masks base::as.difftime()

✖ lubridate::date() masks base::date()

✖ dplyr::filter() masks stats::filter()

✖ lubridate::intersect() masks base::intersect()

✖ dplyr::lag() masks stats::lag()

✖ lubridate::setdiff() masks base::setdiff()

✖ lubridate::union() masks base::union()R

wthr_path <- here::here("episodes", "data", c("weather_brisbane.csv", "weather_sydney.csv"))

# column types

col_types <- list(

date = col_date(format="%Y-%m-%d"),

min_temp_c = col_double(),

max_temp_c = col_double(),

rainfall_mm = col_double(),

evaporation_mm = col_double(),

sunshine_hours = col_double(),

dir_max_wind_gust = col_character(),

speed_max_wind_gust_kph = col_double(),

time_max_wind_gust = col_time(),

temp_9am_c = col_double(),

rel_humid_9am_pc = col_integer(),

cloud_amount_9am_oktas = col_double(),

wind_direction_9am = col_character(),

wind_speed_9am_kph = col_double(),

MSL_pressure_9am_hPa = col_double(),

temp_3pm_c = col_double(),

rel_humid_3pm_pc = col_double(),

cloud_amount_3pm_oktas = col_double(),

wind_direction_3pm = col_character(),

wind_speed_3pm_kph = col_double(),

MSL_pressure_3pm_hPa = col_double()

)

# read in data

weather <- read_csv(wthr_path, skip=10,

col_types=col_types, col_names = names(col_types),

id = "file"

)

ERROR

Error: '/home/runner/work/cmri_R_workshop/cmri_R_workshop/site/built/episodes/data/weather_brisbane.csv' does not exist.R

glimpse(weather)

ERROR

Error in glimpse(weather): object 'weather' not foundNothing much new here if you already did the readr episode.

R

# 2. Create a column with the name of the city ('brisbane', or 'sydney').

weather <- weather %>%

mutate(city = stringr::str_extract(file, "brisbane|sydney"))

ERROR

Error in mutate(., city = stringr::str_extract(file, "brisbane|sydney")): object 'weather' not foundR

# 3. What is the median minimum (`min_temp_c`) and maximum (`max_temp_c`)

# temperature in the observations for each city?

weather %>%

group_by(city) %>%

summarise(median_min_temp = median(min_temp_c),

median_max_temp = median(max_temp_c, na.rm=TRUE))

ERROR

Error in group_by(., city): object 'weather' not foundWe need na.rm=TRUE for the maximum column because this column contains NA values

R

#4. Count the number of days when there were more than 10 hours of sunshine

# (`sunshine_hours`) in each city.

weather %>%

mutate(sunny = sunshine_hours > 10) %>%

count(sunny, city)

ERROR

Error in mutate(., sunny = sunshine_hours > 10): object 'weather' not foundHere I did count() as a shortcut for group_by() and summarise(), but the long way works too.

R

#5. A cold cloudy day is one where there were fewer than 10 hours of sunshine,

# and the maximum temperature was less than 15 degrees.

# A hot sunny day is one where there were more than 10 hours of sunshine,

# and the maximum temperature was more than than 25 degrees.

# Calculate the the mean relative humidity at 9am (`rel_humid_9am_pc`) and

# 3pm (`rel_humid_3pm_pc`) on days that were hot and sunny, cold and cloudy, or neither.

weather %>%

mutate(day = case_when(

sunshine_hours > 10 & max_temp_c > 25 ~ "hot_sunny",

sunshine_hours <= 10 & max_temp_c < 15 ~ "cold_cloudy",

TRUE ~ "neither"

)) %>%

group_by(day) %>%

summarise(mean_humid_9am = mean(rel_humid_9am_pc),

mean_humid_3pm = mean(rel_humid_3pm_pc, na.rm=TRUE))

ERROR

Error in mutate(., day = case_when(sunshine_hours > 10 & max_temp_c > : object 'weather' not foundThere were no cold cloudy days in this dataset.

R

# 6. What is the mean maximum temperature on the 5 hottest days in each city?

weather %>%

group_by(city) %>%

slice_max(order_by = max_temp_c, n = 5, with_ties=FALSE) %>%

summarise(mean_max_temp = mean(max_temp_c, na.rm=TRUE))

ERROR

Error in group_by(., city): object 'weather' not foundHere I use slice_max(), but there are more complicated ways to solve this using other dplyr verbs.

R

#7. Add a column ranking the days by minimum temperature for each city,

# where the coldest day for each is rank 1, the next coldest is rank 2, etc.

weather %>%

group_by(city) %>%

arrange(min_temp_c) %>%

mutate(rank = row_number())

ERROR

Error in group_by(., city): object 'weather' not foundI tend to use the combination of arrange(), mutate() and row_number() for adding ranks, but there are probably other ways of achieving the same end.

R

# 8. If a cloudy day is one where there are 10 or fewer hours of sunshine,

# on how many days was the forecast accurate?

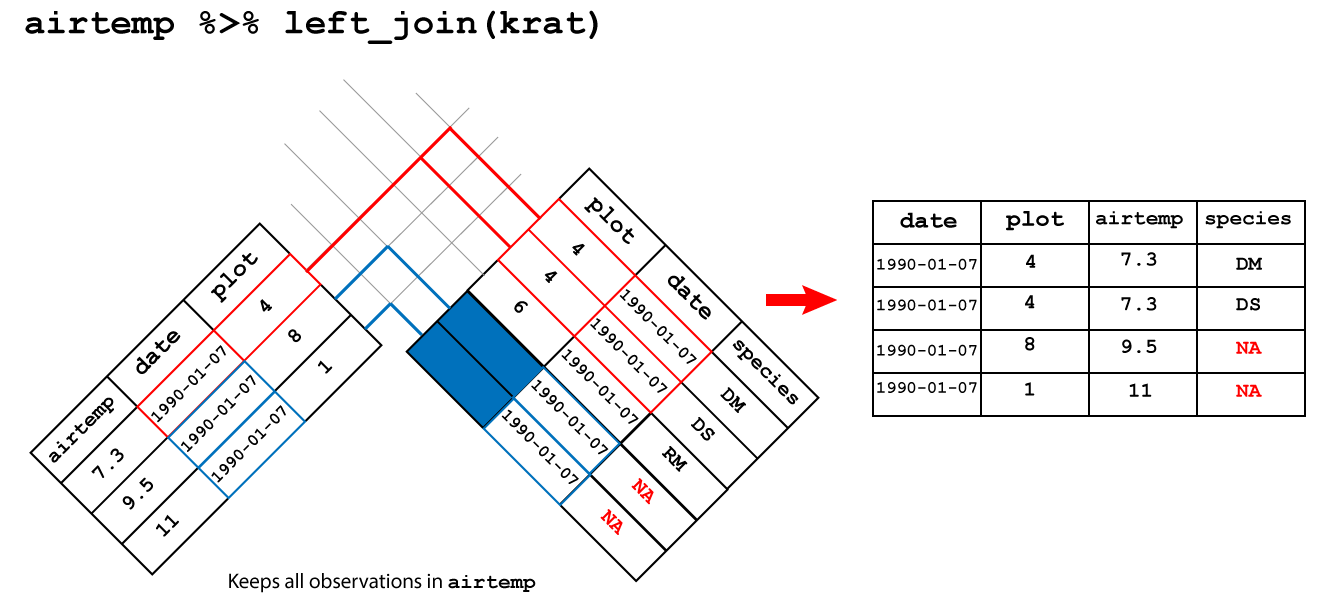

weather %>%

left_join(forecast, by="date") %>%

mutate(cloudy_or_sunny = ifelse(sunshine_hours > 10, "sunny", "cloudy")) %>%

mutate(forecast_accurate = forecast == cloudy_or_sunny) %>%

count(forecast_accurate)

ERROR

Error in left_join(., forecast, by = "date"): object 'weather' not foundSee challenge 7 for an alternative way of solving this problem.

Manipulating data with dplyr

So once we have our data in a tidy format, what do we do with it? For analysis, I often turn to the dplyr package, which contains several useful functions for manipulating tables of data.

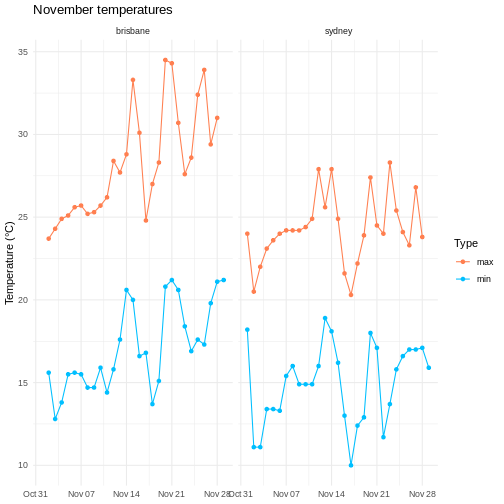

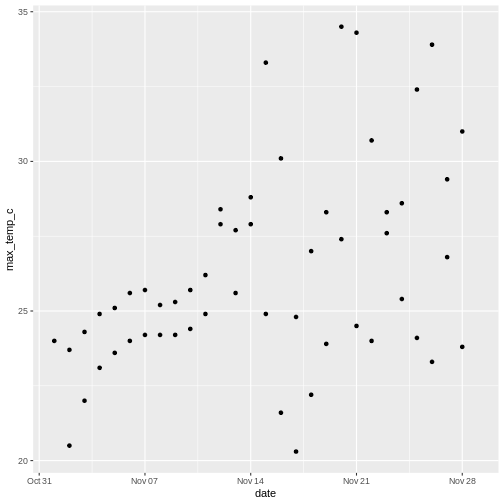

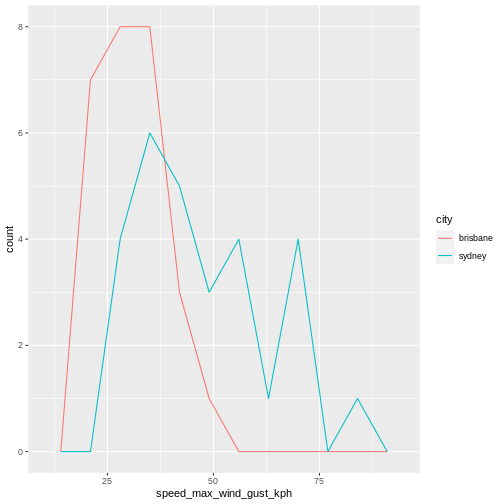

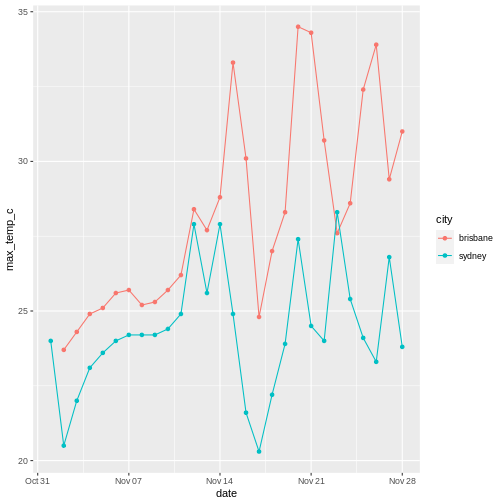

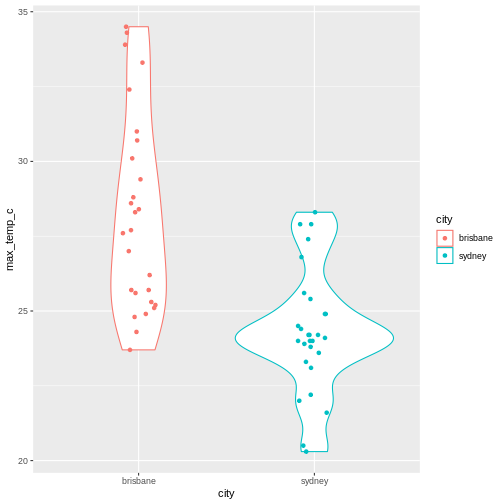

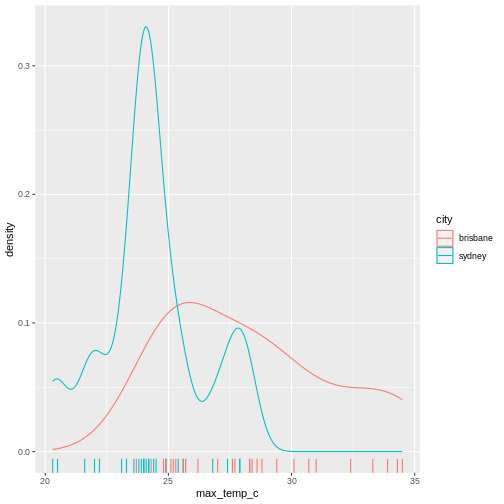

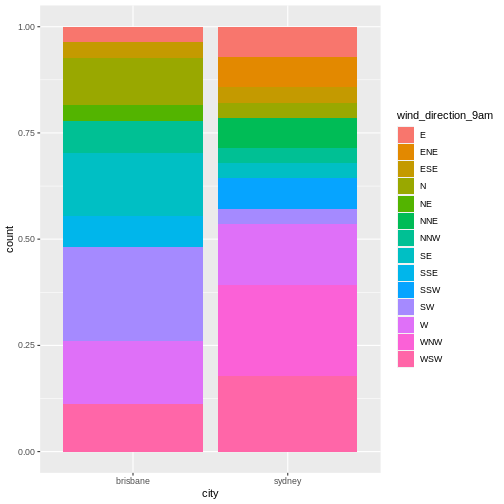

To illustrate the functions of this package, we’ll use a dataset of weather observations in Brisbane and Sydney from the Bureau of Meterology.

These files are called weather_brisbane.csv and weather_sydney.csv.

First, we load both files using readr:

R

# load tidyverse

library(tidyverse)

# data files

# data files - readr can also read data from the internet

data_dir <- "https://raw.githubusercontent.com/szsctt/cmri_R_workshop/main/episodes/data/"

data_files <- file.path(data_dir, c("weather_sydney.csv", "weather_brisbane.csv"))

# column types

col_types <- list(

date = col_date(format="%Y-%m-%d"),

min_temp_c = col_double(),

max_temp_c = col_double(),

rainfall_mm = col_double(),

evaporation_mm = col_double(),

sunshine_hours = col_double(),

dir_max_wind_gust = col_character(),

speed_max_wind_gust_kph = col_double(),

time_max_wind_gust = col_time(),

temp_9am_c = col_double(),

rel_humid_9am_pc = col_integer(),

cloud_amount_9am_oktas = col_double(),

wind_direction_9am = col_character(),

wind_speed_9am_kph = col_double(),

MSL_pressure_9am_hPa = col_double(),

temp_3pm_c = col_double(),

rel_humid_3pm_pc = col_double(),

cloud_amount_3pm_oktas = col_double(),

wind_direction_3pm = col_character(),

wind_speed_3pm_kph = col_double(),

MSL_pressure_3pm_hPa = col_double()

)

# read in data

weather <- readr::read_csv(data_files, skip=10,

col_types=col_types, col_names = names(col_types),

id="file")

Creating summaries with summarise()

First, we would like to know what the mean minimum and maximum temperatures were overall. For this, we can use summarise():

R

weather %>%

summarise(mean_min_temp = mean(min_temp_c),

mean_max_temp = mean(max_temp_c))

OUTPUT

# A tibble: 1 × 2

mean_min_temp mean_max_temp

<dbl> <dbl>

1 16.0 NANotice that the mean_max_temp is NA, because we had some NA values in this column. In R we use NA for missing values. So how does one take the mean of some numbers, some of which are missing? We can’t so the answer is also a missing value. We can, however, tell the mean() function to ignore the missing values using na.rm=TRUE:

R

weather %>%

summarise(mean_min_temp = mean(min_temp_c),

mean_max_temp = mean(max_temp_c, na.rm=TRUE))

OUTPUT

# A tibble: 1 × 2

mean_min_temp mean_max_temp

<dbl> <dbl>

1 16.0 26.2R

weather %>%

summarise(median_min_temp = median(min_temp_c),

median_max_temp = median(max_temp_c, na.rm=TRUE))

OUTPUT

# A tibble: 1 × 2

median_min_temp median_max_temp

<dbl> <dbl>

1 15.9 25.3dplyr also has some special functions that are designed to be used inside of other functions. For example, if we want to know how many observations there were of the minimum and maximum temperatures, we could use n(). Or if we wanted to know how many different directions the maximum wind gust had, we can use n_distinct().

R

weather %>%

summarise(n_days_observed = n(),

n_wind_dir = n_distinct(dir_max_wind_gust))

OUTPUT

# A tibble: 1 × 2

n_days_observed n_wind_dir

<int> <int>

1 57 15Grouped summaries with group_by()

However, all of these summaries have combined the observations for Sydney and Brisbane. It probably makes sense to group the observations by city, and then compute the summary statistics. For this, we can use group_by().

If you use group_by() on a tibble, it doesn’t actually look like it changes that much.

R

weather %>%

group_by(file)

OUTPUT

# A tibble: 57 × 22

# Groups: file [2]

file date min_t…¹ max_t…² rainf…³ evapo…⁴ sunsh…⁵ dir_m…⁶ speed…⁷

<chr> <date> <dbl> <dbl> <dbl> <dbl> <dbl> <chr> <dbl>

1 https://r… 2022-11-01 18.2 24 0.2 4.6 9.5 WNW 69

2 https://r… 2022-11-02 11.1 20.5 0.6 13 12.8 W 67

3 https://r… 2022-11-03 11.1 22 0 7.8 8.9 W 56

4 https://r… 2022-11-04 13.4 23.1 1 6 5.7 SSE 26

5 https://r… 2022-11-05 13.4 23.6 0.2 4.4 11.8 ENE 37

6 https://r… 2022-11-06 13.3 24 0 4 12.1 ENE 39

7 https://r… 2022-11-07 15.4 24.2 0 9.8 12.3 NE 41

8 https://r… 2022-11-08 16 24.2 1.2 8 11 ENE 35

9 https://r… 2022-11-09 14.9 24.2 0.2 8 10.3 E 33

10 https://r… 2022-11-10 14.9 24.4 0 7.8 9.3 ENE 43

# … with 47 more rows, 13 more variables: time_max_wind_gust <time>,

# temp_9am_c <dbl>, rel_humid_9am_pc <int>, cloud_amount_9am_oktas <dbl>,

# wind_direction_9am <chr>, wind_speed_9am_kph <dbl>,

# MSL_pressure_9am_hPa <dbl>, temp_3pm_c <dbl>, rel_humid_3pm_pc <dbl>,

# cloud_amount_3pm_oktas <dbl>, wind_direction_3pm <chr>,

# wind_speed_3pm_kph <dbl>, MSL_pressure_3pm_hPa <dbl>, and abbreviated

# variable names ¹min_temp_c, ²max_temp_c, ³rainfall_mm, ⁴evaporation_mm, …The only difference is that when you print it out, it tells you that it’s grouped by file. However, a grouped data frame interacts differently with other dplyr verbs, such as summary.

R

weather %>%

group_by(file) %>%

summarise(median_min_temp = median(min_temp_c),

median_max_temp = median(max_temp_c, na.rm=TRUE))

OUTPUT

# A tibble: 2 × 3

file media…¹ media…²

<chr> <dbl> <dbl>

1 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/main… 16.7 27.7

2 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/main… 15.4 24.2

# … with abbreviated variable names ¹median_min_temp, ²median_max_tempNow we get the median temperature for both Sydney and Brisbane.

R

weather %>%

group_by(file, dir_max_wind_gust) %>%

summarise(max_max_wind_gust = max(speed_max_wind_gust_kph))

OUTPUT

`summarise()` has grouped output by 'file'. You can override using the

`.groups` argument.OUTPUT

# A tibble: 24 × 3

# Groups: file [2]

file dir_m…¹ max_m…²

<chr> <chr> <dbl>

1 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/mai… E 35

2 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/mai… ENE 37

3 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/mai… ESE 35

4 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/mai… NE 26

5 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/mai… NNE 35

6 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/mai… NW 48

7 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/mai… S 39

8 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/mai… W 44

9 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/mai… WNW 33

10 https://raw.githubusercontent.com/szsctt/cmri_R_workshop/mai… WSW 43

# … with 14 more rows, and abbreviated variable names ¹dir_max_wind_gust,

# ²max_max_wind_gustR

weather %>%

group_by(file, dir_max_wind_gust) %>%

summarise(max_max_wind_gust = max(speed_max_wind_gust_kph)) %>%

ungroup() %>%

pivot_wider(id_cols=file, names_from = "dir_max_wind_gust", values_from = "max_max_wind_gust")

OUTPUT

`summarise()` has grouped output by 'file'. You can override using the

`.groups` argument.OUTPUT

# A tibble: 2 × 16

file E ENE ESE NE NNE NW S W WNW WSW `NA` NNW

<chr> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl> <dbl>

1 https… 35 37 35 26 35 48 39 44 33 43 NA NA

2 https… 33 50 26 41 NA NA 54 83 69 54 NA 46

# … with 3 more variables: SSE <dbl>, SSW <dbl>, SW <dbl>Do you prefer the long or wide form of the table?

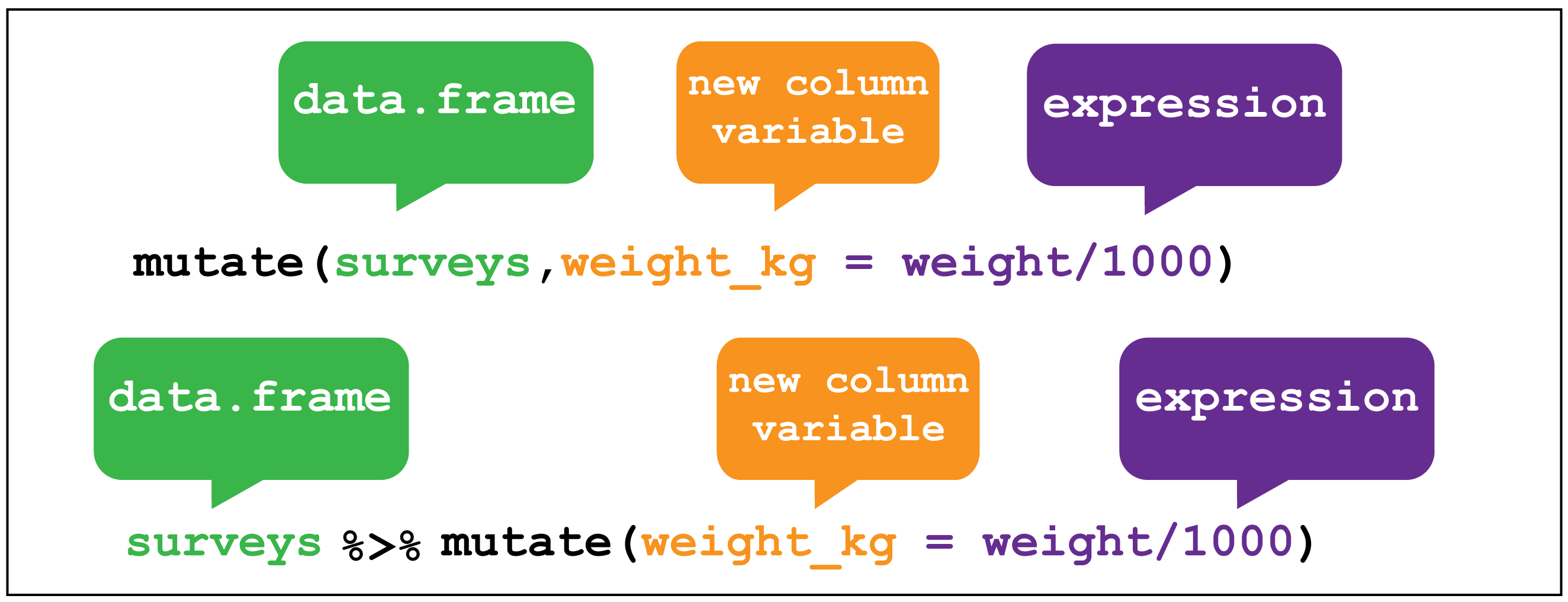

Creating new columns with mutate()

It’s kind of annoying that we have the whole file names, rather than just the names of the cities. To fix this, we can create (or overwrite) columns with mutate().

dplyr::mutate() image creditFor example, the stringr::str_extract() function extracts a matching pattern from a string:

R

stringr::str_extract(data_files, "sydney|brisbane")

OUTPUT

[1] "sydney" "brisbane"Regular expressions for pattern matching in strings

The second argument to str_extract() is a regular expression, or regex. Using regular expressions is a hugely flexible way to specify a pattern to match in a string, but it’s a somewhat complicated topic that I won’t go into here. If you’re interested in learning more, you can look at the stringr documentation on regular expressions.

We can use mutate() to apply the str_extract() function to the file column

R

weather <- weather %>%

mutate(city = stringr::str_extract(file, "sydney|brisbane"))

weather

OUTPUT

# A tibble: 57 × 23

file date min_t…¹ max_t…² rainf…³ evapo…⁴ sunsh…⁵ dir_m…⁶ speed…⁷

<chr> <date> <dbl> <dbl> <dbl> <dbl> <dbl> <chr> <dbl>

1 https://r… 2022-11-01 18.2 24 0.2 4.6 9.5 WNW 69

2 https://r… 2022-11-02 11.1 20.5 0.6 13 12.8 W 67

3 https://r… 2022-11-03 11.1 22 0 7.8 8.9 W 56

4 https://r… 2022-11-04 13.4 23.1 1 6 5.7 SSE 26

5 https://r… 2022-11-05 13.4 23.6 0.2 4.4 11.8 ENE 37

6 https://r… 2022-11-06 13.3 24 0 4 12.1 ENE 39

7 https://r… 2022-11-07 15.4 24.2 0 9.8 12.3 NE 41

8 https://r… 2022-11-08 16 24.2 1.2 8 11 ENE 35

9 https://r… 2022-11-09 14.9 24.2 0.2 8 10.3 E 33

10 https://r… 2022-11-10 14.9 24.4 0 7.8 9.3 ENE 43

# … with 47 more rows, 14 more variables: time_max_wind_gust <time>,

# temp_9am_c <dbl>, rel_humid_9am_pc <int>, cloud_amount_9am_oktas <dbl>,

# wind_direction_9am <chr>, wind_speed_9am_kph <dbl>,

# MSL_pressure_9am_hPa <dbl>, temp_3pm_c <dbl>, rel_humid_3pm_pc <dbl>,

# cloud_amount_3pm_oktas <dbl>, wind_direction_3pm <chr>,

# wind_speed_3pm_kph <dbl>, MSL_pressure_3pm_hPa <dbl>, city <chr>, and

# abbreviated variable names ¹min_temp_c, ²max_temp_c, ³rainfall_mm, …Now if we repeat the same summary as before, we get an output that’s a bit easier to read.

R

weather %>%

group_by(city) %>%

summarise(median_min_temp = median(min_temp_c),

median_max_temp = median(max_temp_c, na.rm=TRUE))

OUTPUT

# A tibble: 2 × 3

city median_min_temp median_max_temp

<chr> <dbl> <dbl>

1 brisbane 16.7 27.7

2 sydney 15.4 24.2Mutating multiple columns with across()

Let’s imagine that the calibration was wrong for both temperature sensors, and all the temperature measurements are out by 1°C. We’d like to add 1 to each of the temperature measurements. There are multiple columns that contain temperatures, so we could do this:

R

weather %>%

mutate(min_temp_c = min_temp_c + 1,

max_temp_c = max_temp_c + 1,

temp_9am_c = temp_9am_c + 1,

temp_3pm_c = temp_3pm_c + 1)

OUTPUT

# A tibble: 57 × 23

file date min_t…¹ max_t…² rainf…³ evapo…⁴ sunsh…⁵ dir_m…⁶ speed…⁷

<chr> <date> <dbl> <dbl> <dbl> <dbl> <dbl> <chr> <dbl>

1 https://r… 2022-11-01 19.2 25 0.2 4.6 9.5 WNW 69

2 https://r… 2022-11-02 12.1 21.5 0.6 13 12.8 W 67

3 https://r… 2022-11-03 12.1 23 0 7.8 8.9 W 56

4 https://r… 2022-11-04 14.4 24.1 1 6 5.7 SSE 26

5 https://r… 2022-11-05 14.4 24.6 0.2 4.4 11.8 ENE 37

6 https://r… 2022-11-06 14.3 25 0 4 12.1 ENE 39

7 https://r… 2022-11-07 16.4 25.2 0 9.8 12.3 NE 41

8 https://r… 2022-11-08 17 25.2 1.2 8 11 ENE 35

9 https://r… 2022-11-09 15.9 25.2 0.2 8 10.3 E 33

10 https://r… 2022-11-10 15.9 25.4 0 7.8 9.3 ENE 43

# … with 47 more rows, 14 more variables: time_max_wind_gust <time>,

# temp_9am_c <dbl>, rel_humid_9am_pc <int>, cloud_amount_9am_oktas <dbl>,

# wind_direction_9am <chr>, wind_speed_9am_kph <dbl>,

# MSL_pressure_9am_hPa <dbl>, temp_3pm_c <dbl>, rel_humid_3pm_pc <dbl>,

# cloud_amount_3pm_oktas <dbl>, wind_direction_3pm <chr>,

# wind_speed_3pm_kph <dbl>, MSL_pressure_3pm_hPa <dbl>, city <chr>, and

# abbreviated variable names ¹min_temp_c, ²max_temp_c, ³rainfall_mm, …But it’s a bit annoying to type out each column. Instead, we can use across() inside mutate() to apply the same transformation to all columns whose name contains the string “temp”. The syntax is a little complicated, so don’t worry if you don’t get it straight away. We use the contains() function to get the columns we want (by matching the regex “temp”), and then to each of these columns we add one - the . will be replaced by the name of each column when the expression is evaluated.

R

weather %>%

mutate(across(contains("temp"), ~.+1))

OUTPUT

# A tibble: 57 × 23

file date min_t…¹ max_t…² rainf…³ evapo…⁴ sunsh…⁵ dir_m…⁶ speed…⁷

<chr> <date> <dbl> <dbl> <dbl> <dbl> <dbl> <chr> <dbl>

1 https://r… 2022-11-01 19.2 25 0.2 4.6 9.5 WNW 69

2 https://r… 2022-11-02 12.1 21.5 0.6 13 12.8 W 67

3 https://r… 2022-11-03 12.1 23 0 7.8 8.9 W 56

4 https://r… 2022-11-04 14.4 24.1 1 6 5.7 SSE 26

5 https://r… 2022-11-05 14.4 24.6 0.2 4.4 11.8 ENE 37

6 https://r… 2022-11-06 14.3 25 0 4 12.1 ENE 39

7 https://r… 2022-11-07 16.4 25.2 0 9.8 12.3 NE 41

8 https://r… 2022-11-08 17 25.2 1.2 8 11 ENE 35

9 https://r… 2022-11-09 15.9 25.2 0.2 8 10.3 E 33

10 https://r… 2022-11-10 15.9 25.4 0 7.8 9.3 ENE 43

# … with 47 more rows, 14 more variables: time_max_wind_gust <time>,

# temp_9am_c <dbl>, rel_humid_9am_pc <int>, cloud_amount_9am_oktas <dbl>,

# wind_direction_9am <chr>, wind_speed_9am_kph <dbl>,

# MSL_pressure_9am_hPa <dbl>, temp_3pm_c <dbl>, rel_humid_3pm_pc <dbl>,

# cloud_amount_3pm_oktas <dbl>, wind_direction_3pm <chr>,

# wind_speed_3pm_kph <dbl>, MSL_pressure_3pm_hPa <dbl>, city <chr>, and

# abbreviated variable names ¹min_temp_c, ²max_temp_c, ³rainfall_mm, …Equivalently, we could also use a function to make the transformation.

R

weather %>%

mutate(across(contains("temp"), function(x){

return(x+1)

}))

OUTPUT

# A tibble: 57 × 23

file date min_t…¹ max_t…² rainf…³ evapo…⁴ sunsh…⁵ dir_m…⁶ speed…⁷

<chr> <date> <dbl> <dbl> <dbl> <dbl> <dbl> <chr> <dbl>

1 https://r… 2022-11-01 19.2 25 0.2 4.6 9.5 WNW 69

2 https://r… 2022-11-02 12.1 21.5 0.6 13 12.8 W 67

3 https://r… 2022-11-03 12.1 23 0 7.8 8.9 W 56

4 https://r… 2022-11-04 14.4 24.1 1 6 5.7 SSE 26

5 https://r… 2022-11-05 14.4 24.6 0.2 4.4 11.8 ENE 37

6 https://r… 2022-11-06 14.3 25 0 4 12.1 ENE 39

7 https://r… 2022-11-07 16.4 25.2 0 9.8 12.3 NE 41

8 https://r… 2022-11-08 17 25.2 1.2 8 11 ENE 35

9 https://r… 2022-11-09 15.9 25.2 0.2 8 10.3 E 33

10 https://r… 2022-11-10 15.9 25.4 0 7.8 9.3 ENE 43

# … with 47 more rows, 14 more variables: time_max_wind_gust <time>,

# temp_9am_c <dbl>, rel_humid_9am_pc <int>, cloud_amount_9am_oktas <dbl>,

# wind_direction_9am <chr>, wind_speed_9am_kph <dbl>,

# MSL_pressure_9am_hPa <dbl>, temp_3pm_c <dbl>, rel_humid_3pm_pc <dbl>,

# cloud_amount_3pm_oktas <dbl>, wind_direction_3pm <chr>,

# wind_speed_3pm_kph <dbl>, MSL_pressure_3pm_hPa <dbl>, city <chr>, and

# abbreviated variable names ¹min_temp_c, ²max_temp_c, ³rainfall_mm, …Filtering rows with filter()

Let’s say that now we want information about days that were cloudy - for example, those where there were fewer than 10 sunshine hours. We can use filter() to get only those rows.

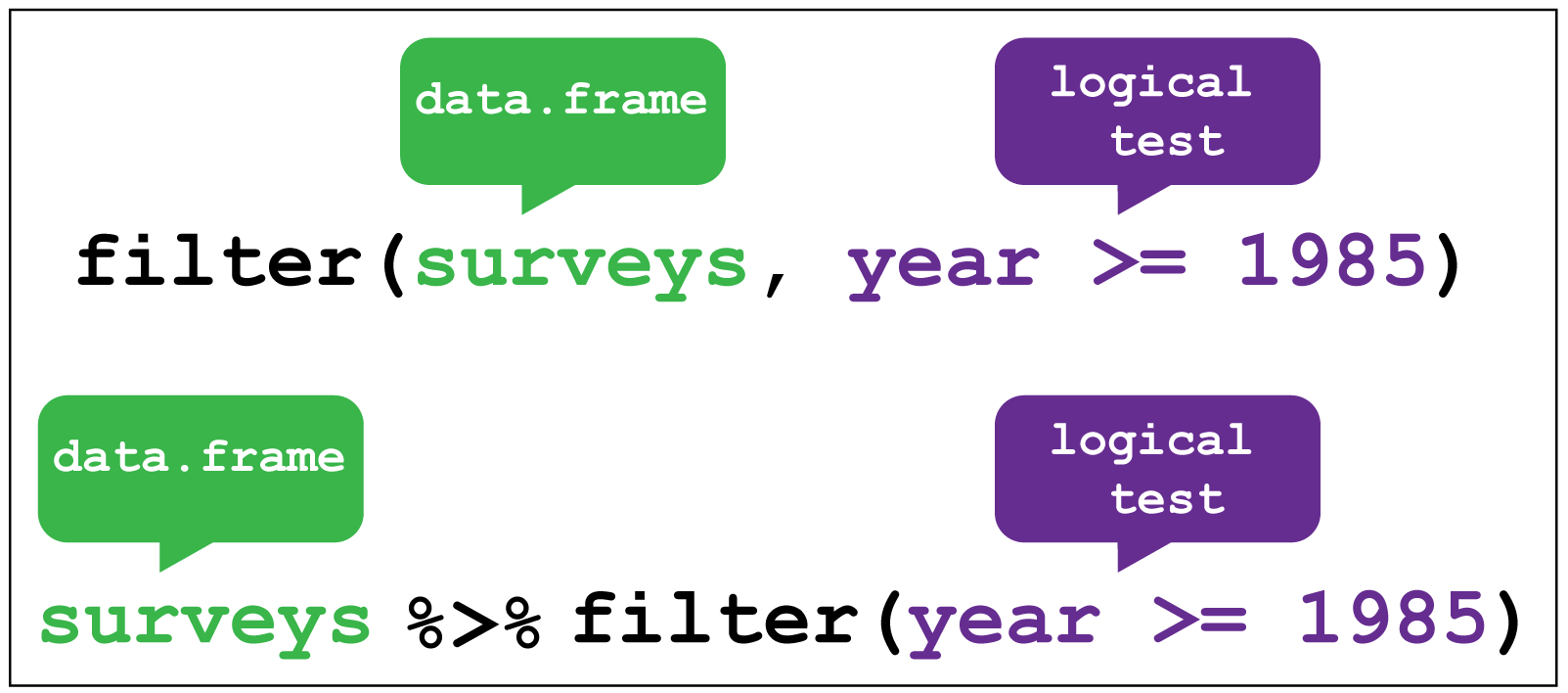

dplyr::filter() image creditR

weather %>%

filter(sunshine_hours < 10)

OUTPUT

# A tibble: 19 × 23

file date min_t…¹ max_t…² rainf…³ evapo…⁴ sunsh…⁵ dir_m…⁶ speed…⁷

<chr> <date> <dbl> <dbl> <dbl> <dbl> <dbl> <chr> <dbl>

1 https://r… 2022-11-01 18.2 24 0.2 4.6 9.5 WNW 69

2 https://r… 2022-11-03 11.1 22 0 7.8 8.9 W 56

3 https://r… 2022-11-04 13.4 23.1 1 6 5.7 SSE 26

4 https://r… 2022-11-10 14.9 24.4 0 7.8 9.3 ENE 43

5 https://r… 2022-11-11 14.9 24.9 0 7.8 9.1 ENE 39

6 https://r… 2022-11-12 16 27.9 0 7.8 9.5 ESE 26

7 https://r… 2022-11-13 18.9 25.6 0 6.4 1.7 NNW 46

8 https://r… 2022-11-15 16.2 24.9 0.2 9.6 9.2 SW 33

9 https://r… 2022-11-16 13 21.6 0.8 8 9.1 S 54

10 https://r… 2022-11-27 17 26.8 0 8 3.7 WSW 54

11 https://r… 2022-11-28 17.1 23.8 8.8 3.8 8.9 SSW 31

12 https://r… 2022-11-08 14.7 25.2 0 10 9.9 ESE 33

13 https://r… 2022-11-14 20.6 28.8 0 9.8 8.4 ENE 26

14 https://r… 2022-11-20 20.8 34.5 0 8.8 5.7 NW 48

15 https://r… 2022-11-22 20.6 30.7 0 11.6 9.2 ENE 28

16 https://r… 2022-11-23 18.4 27.6 0 8.8 4.4 WNW 22

17 https://r… 2022-11-24 16.9 28.6 0 8 2.7 NE 20

18 https://r… 2022-11-27 19.8 29.4 0 8.8 6.1 NW 24

19 https://r… 2022-11-28 21.1 31 21.4 5.4 6.1 S 39

# … with 14 more variables: time_max_wind_gust <time>, temp_9am_c <dbl>,

# rel_humid_9am_pc <int>, cloud_amount_9am_oktas <dbl>,

# wind_direction_9am <chr>, wind_speed_9am_kph <dbl>,

# MSL_pressure_9am_hPa <dbl>, temp_3pm_c <dbl>, rel_humid_3pm_pc <dbl>,

# cloud_amount_3pm_oktas <dbl>, wind_direction_3pm <chr>,

# wind_speed_3pm_kph <dbl>, MSL_pressure_3pm_hPa <dbl>, city <chr>, and

# abbreviated variable names ¹min_temp_c, ²max_temp_c, ³rainfall_mm, …R

weather %>%

filter(sunshine_hours > 10) %>%

group_by(city) %>%

summarise(n_days = n())

OUTPUT

# A tibble: 2 × 2

city n_days

<chr> <int>

1 brisbane 19

2 sydney 17There were more days with more than 10 hours of sunlight in Brisbane than in Sydney. I’ll let you draw your own conclusions.

Complex filters with case_when()

Let’s say we wanted to keep only the rows from sunny, warm days and cloudy, cold days. We could do this just with one logical expression.

R

weather %>%

filter((sunshine_hours > 10 & max_temp_c > 25) | (sunshine_hours < 10 & max_temp_c < 15))

OUTPUT

# A tibble: 19 × 23

file date min_t…¹ max_t…² rainf…³ evapo…⁴ sunsh…⁵ dir_m…⁶ speed…⁷

<chr> <date> <dbl> <dbl> <dbl> <dbl> <dbl> <chr> <dbl>

1 https://r… 2022-11-14 18.1 27.9 37.6 4.2 10.8 W 67

2 https://r… 2022-11-20 18 27.4 0.8 7.8 13.3 W 70

3 https://r… 2022-11-23 13.7 28.3 0 8 12.4 W 54

4 https://r… 2022-11-24 15.8 25.4 0 11.2 12 E 33

5 https://r… 2022-11-05 15.5 25.1 0 8.6 12.2 E 30

6 https://r… 2022-11-06 15.6 25.6 0 8.2 12.7 E 31

7 https://r… 2022-11-07 15.5 25.7 0 7.4 12.6 ENE 37

8 https://r… 2022-11-09 14.7 25.3 0 8.4 12.4 ESE 30

9 https://r… 2022-11-10 15.9 25.7 0 8.6 12.5 E 35

10 https://r… 2022-11-11 14.4 26.2 0 9.4 12.1 E 22

11 https://r… 2022-11-12 15.8 28.4 0 7.4 10.5 NE 26

12 https://r… 2022-11-13 17.6 27.7 0 7.2 12.4 NNE 33

13 https://r… 2022-11-15 20 33.3 0 5.6 12.9 WNW 30

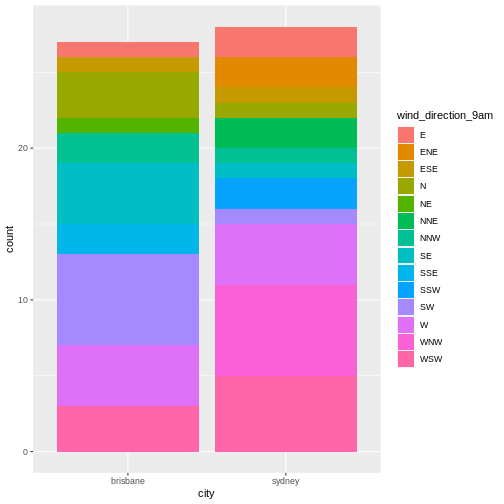

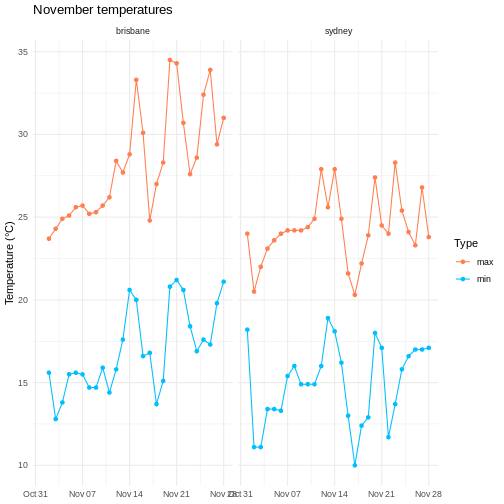

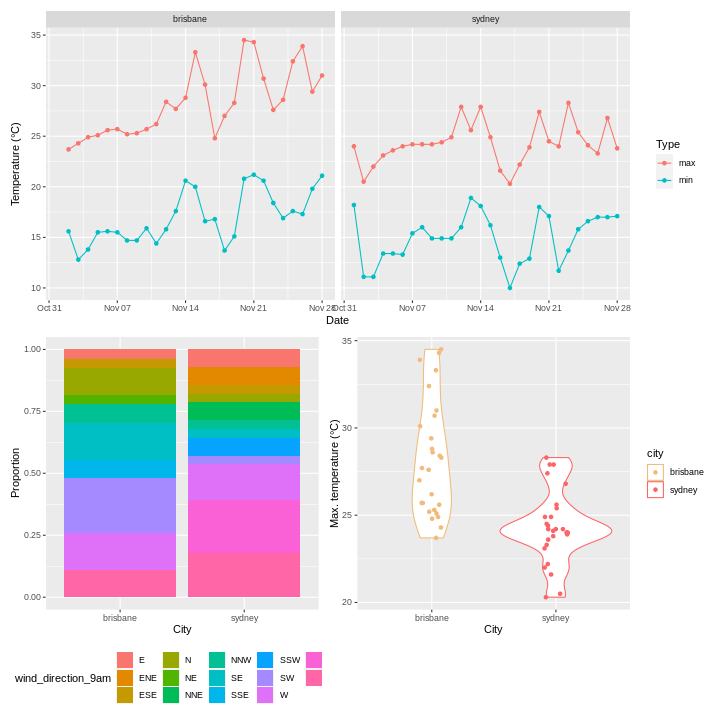

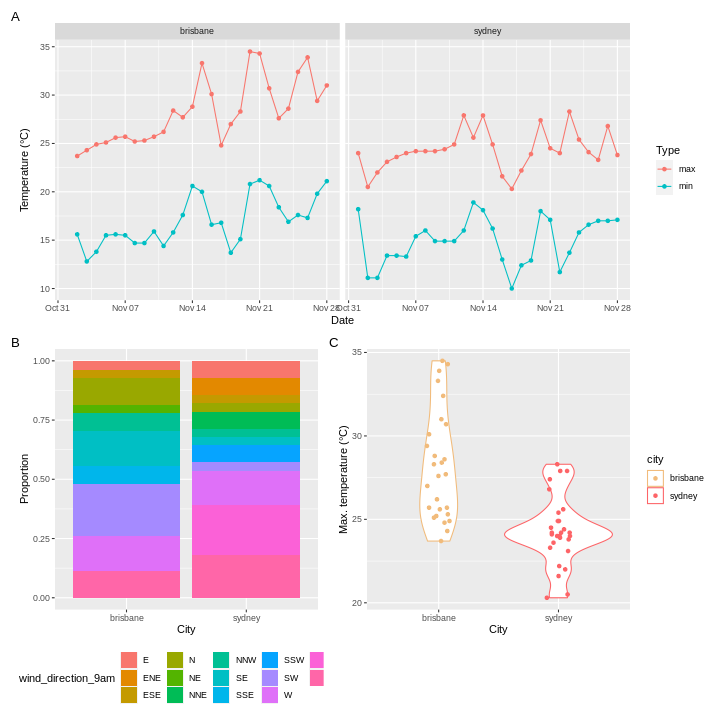

14 https://r… 2022-11-16 16.6 30.1 0 8.2 12.5 WSW 43